StarWind Virtual HCI Appliance: Configuration Guide for Proxmox Virtual Environment

- November 20, 2024

Annotation

Relevant products

StarWind Virtual HCI Appliance (VHCA)

Purpose

This document outlines how to configure a StarWind Virtual HCI Appliance (VHCA) based on Proxmox Virtual Environment, with VSAN running as a Controller Virtual Machine (CVM). The guide includes steps to prepare Proxmox VE hosts for clustering, configure physical and virtual networking, and set up the Virtual SAN Controller Virtual Machine.

Audience

This technical guide is intended for storage and virtualization architects, system administrators, and partners designing virtualized environments using StarWind Virtual HCI Appliance (VHCA).

Expected result

The end result of following this guide will be a fully configured high-availability StarWind Virtual HCI Appliance (VHCA) powered by Proxmox Virtual Environment that includes virtual machine shared storage provided by StarWind VSAN.

Prerequisites

Prior to configuring StarWind Virtual HCI Appliance (VHCA), please make sure that the system meets the requirements, which are available via the following link:

https://www.starwindsoftware.com/system-requirements

Recommended RAID settings for HDD and SSD disks:

https://knowledgebase.starwindsoftware.com/guidance/recommended-raid-settings-for-hdd-and-ssd-disks/

Please read the StarWind Virtual SAN Best Practices document for additional information:

https://www.starwindsoftware.com/resource-library/starwind-virtual-san-best-practices

Solution Diagram:

Prerequisites:

1. Two servers with local storage and direct network connections for Synchronization and iSCSI/StarWind heartbeat traffic.

2. Servers should have local storage available for Proxmox VE and the StarWind VSAN Controller Virtual Machine. The CVM uses local storage to create replicated shared storage, which is connected to the Proxmox VE nodes via iSCSI.

3. StarWind HA devices require at least two separate network links between the nodes. The first one is used for iSCSI traffic, the second one for Synchronization traffic.

NOTE. The Synchronization and iSCSI/StarWind heartbeat interfaces on each node should be in different subnets and connected directly according to the network diagram above. Here, the 172.16.10.x subnet is used for the iSCSI/StarWind heartbeat traffic, while the 172.16.20.x subnet is used for the Synchronization traffic.

4. A two-node cluster requires a quorum witness. iSCSI/SMB/NFS cannot be used for this purpose. The QDevice-Net package must be installed on a third Linux server, which will act as the witness.

https://pve.proxmox.com/wiki/Cluster_Manager#_corosync_external_vote_support
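The need for a third vote follows from how a vote-based cluster decides quorum: a partition stays quorate only while it holds a strict majority of the expected votes. The sketch below illustrates that arithmetic only; the function name quorum_threshold is made up for this example and is not part of any Proxmox tool.

```shell
#!/bin/sh
# Illustrative only: majority threshold for a given number of expected votes.
quorum_threshold() {
    # Integer division: floor(votes / 2) + 1
    echo $(( $1 / 2 + 1 ))
}

# Two nodes, no witness: 2 expected votes, 2 needed,
# so the loss of either node costs the survivor its quorum.
echo "2 votes -> quorum at $(quorum_threshold 2)"

# Two nodes plus one QDevice vote: 3 expected votes, 2 needed,
# so one node can fail while the other keeps quorum with the witness.
echo "3 votes -> quorum at $(quorum_threshold 3)"
```

On a configured cluster, pvecm status reports the expected votes and whether the node is currently quorate.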

Hardware Configuration

Access the BIOS on each server:

1. Set “Boot mode select” to [UEFI].

2. Set AC Power Recovery to On.

3. Set System Profile Settings to Performance.

4. Disable Patrol Read if SSD disks are used.

5. Enable SR-IOV for network cards.

6. Configure separate storage for the OS and for data, or a single RAID array for both, according to the supported RAID configurations here.

Settings for OS RAID1:

Virtual disk name: OS

Disk cache policy: Default (enabled by default)

Write policy: Write Through

Read policy: No read ahead

Stripe Size: 64K

Storage for data:

Supported RAID configurations for the main data storage can be found here.

Deploying Proxmox VE

1. Download Proxmox VE:

https://www.proxmox.com/en/downloads

2. Boot from the downloaded ISO.

3. Click I agree to accept the EULA.

4. Choose the hard disk for the PVE installation. Click Next.

5. Choose the time zone. Click Next.

6. Set the administration password and email address. Click Next.

7. Configure the management network. Click Next.

8. Verify the settings. Click Install.

Preconfiguring Proxmox VE hosts

1. The Proxmox cluster should be created before deploying any virtual machines.

2. A two-node cluster requires a quorum witness. iSCSI/SMB/NFS cannot be used for this purpose. The QDevice-Net package must be installed on a third Linux server, which will act as the witness.

https://pve.proxmox.com/wiki/Cluster_Manager#_corosync_external_vote_support

3. Install the corosync-qnetd package on the witness server:

    ubuntu# apt install corosync-qnetd

4. Install the corosync-qdevice package on both cluster nodes:

    pve# apt install corosync-qdevice

5. Configure quorum by running the following command on one of the Proxmox nodes (replace the placeholder with the IP address of the witness server):

    pve# pvecm qdevice setup %IP_Address_Of_Qdevice%

6. Configure the network interfaces on each node so that the Synchronization and iSCSI/StarWind heartbeat interfaces are in different subnets and connected according to the network diagram above. In this document, the 172.16.10.x subnet is used for iSCSI/StarWind heartbeat traffic, while the 172.16.20.x subnet is used for Synchronization traffic. Select the node and open the System -> Network page.

7. Click Create and choose Linux Bridge.

8. Create the Linux Bridge and set its IP address. Set MTU to 9000. Click Create.

9. Repeat step 8 for all network adapters that will be used for Synchronization and iSCSI/StarWind heartbeat traffic.

10. Verify the network configuration in the /etc/network/interfaces file: log in to the node via SSH and check the contents of the file.

11. Enable IOMMU support in the kernel if PCIe passthrough will be used to pass a RAID controller, HBA, or NVMe drives to the VM. Edit the GRUB configuration file as follows.

For Intel CPU:

Add “intel_iommu=on iommu=pt” to the GRUB_CMDLINE_LINUX_DEFAULT line in the /etc/default/grub file.

For AMD CPU:

Add “iommu=pt” to the GRUB_CMDLINE_LINUX_DEFAULT line in the /etc/default/grub file.

On hosts that boot via GRUB, run update-grub after saving the file so that the new parameters are applied at the next boot.

12. Reboot the host.

13. Repeat steps 6-12 on all nodes.
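For step 11, the kernel parameters can also be appended from the shell. The following is a minimal sketch, assuming the host boots via GRUB, GNU sed is available, and the GRUB_CMDLINE_LINUX_DEFAULT value is enclosed in double quotes. The helper name add_kernel_params is hypothetical. The demonstration works on a scratch file, so it can be tried before touching /etc/default/grub.

```shell
#!/bin/sh
# Hypothetical helper: append kernel parameters to GRUB_CMDLINE_LINUX_DEFAULT
# in the given file, unless they are already present.
add_kernel_params() {
    file=$1
    params=$2
    if grep -q "^GRUB_CMDLINE_LINUX_DEFAULT=.*$params" "$file"; then
        return 0    # already configured, nothing to do
    fi
    # Keep the existing value and append the new parameters (GNU sed -i).
    sed -i "s/^GRUB_CMDLINE_LINUX_DEFAULT=\"\(.*\)\"/GRUB_CMDLINE_LINUX_DEFAULT=\"\1 $params\"/" "$file"
}

# Demonstration on a scratch file; on a real host, pass /etc/default/grub instead.
demo=$(mktemp)
echo 'GRUB_CMDLINE_LINUX_DEFAULT="quiet"' > "$demo"
add_kernel_params "$demo" "intel_iommu=on iommu=pt"   # Intel; use "iommu=pt" for AMD
cat "$demo"
```

After changing the real file, run update-grub and reboot. Running dmesg | grep -e DMAR -e IOMMU afterwards is a common way to check that the IOMMU was initialized.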

Deploying StarWind VSAN Controller VM

1. Download the StarWind VSAN CVM for KVM from the VSAN by StarWind: Overview page.

2. Extract the CVM.qcow2 file from the downloaded archive.

3. Upload the CVM.qcow2 file to the /root/ directory of the Proxmox host via any SFTP client (e.g. WinSCP).

4. Create a VM without an OS. Log in to the Proxmox host via the Web GUI. Click Create VM.

5. Choose node to create VM. Enable Start at boot checkbox and set Start/Shutdown order to 1. Click Next.

6. Choose Do not use any media and choose Guest OS Linux. Click Next.

7. Specify system options. Choose Machine type q35 and check the Qemu Agent box. Click Next.

8. Remove all disks from the VM. Click Next.

9. Assign 8 cores to the VM and choose Host CPU type. Click Next.

10. Assign at least 8GB of RAM to the VM. Click Next.

11. Configure Management network for the VM. Click Next.

12. Confirm settings. Click Finish.

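13. Connect to the Proxmox host via SSH and attach the uploaded .qcow2 file to the VM. In the command below, 100 is the ID of the VM and StarWindAppliance.qcow2 is the name of the uploaded file; adjust both to match your environment.

    qm importdisk 100 /root/StarWindAppliance.qcow2 local-lvm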

14. Open VM and go to Hardware page. Add unused SCSI disk to the VM.

15. Attach Network interfaces for Synchronization and iSCSI/Heartbeat traffic.

16. Open Options page of the VM. Select Boot Order and click Edit.

17. Move the scsi0 device to position #1 in the boot order.

18. Repeat all the steps from this section on other Proxmox hosts.
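Steps 4-17 can also be scripted with the qm command-line tool. The sketch below approximates the GUI steps and is not a StarWind-provided script. The VM ID 100, the VM name, the bridge names (vmbr0, vmbr1, vmbr2), the storage name local-lvm, and the imported disk name vm-100-disk-0 are all assumptions to adjust. By default the commands are only printed; set DRY_RUN=0 on a Proxmox host to execute them.

```shell
#!/bin/sh
DRY_RUN=${DRY_RUN:-1}                  # 1 = print commands only
VMID=100                               # assumed VM ID
IMAGE=/root/StarWindAppliance.qcow2    # file name as used in step 13
STORAGE=local-lvm                      # assumed target storage

# Print the command in dry-run mode, execute it otherwise.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

deploy_cvm() {
    # Steps 4-12: empty Linux VM, q35, QEMU agent, 8 cores, host CPU, 8 GB RAM,
    # management NIC, start at boot with startup order 1.
    run qm create "$VMID" --name starwind-cvm --ostype l26 --machine q35 \
        --agent enabled=1 --scsihw virtio-scsi-single \
        --cores 8 --cpu host --memory 8192 \
        --net0 virtio,bridge=vmbr0 \
        --onboot 1 --startup order=1
    # Step 13: import the appliance disk; it shows up as an unused disk.
    run qm importdisk "$VMID" "$IMAGE" "$STORAGE"
    # Step 14: attach the imported disk as scsi0 (disk name is an assumption).
    run qm set "$VMID" --scsi0 "$STORAGE:vm-$VMID-disk-0"
    # Step 15: NICs for iSCSI/Heartbeat and Synchronization (bridge names assumed).
    run qm set "$VMID" --net1 virtio,bridge=vmbr1 --net2 virtio,bridge=vmbr2
    # Steps 16-17: boot from scsi0 first.
    run qm set "$VMID" --boot order=scsi0
}

deploy_cvm
```

Reviewing the printed commands before running them for real makes it easier to catch a wrong bridge or storage name.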

Attaching storage to StarWind Virtual SAN CVM

Please follow the steps below to attach the desired storage type to the CVM.

Attaching Virtual disk

1. Open the VM Hardware page in Proxmox and add a drive that will be used by the StarWind service. Specify the size of the virtual disk and click OK.

NOTE. The VirtIO SCSI single controller is recommended for better performance. If multiple virtual disks will be combined into a software RAID inside the CVM, the VirtIO SCSI controller should be used.

2. Repeat step 1 to attach additional virtual disks.

3. Start the VM.

4. Repeat steps 1-3 on all nodes.

Attaching PCIe device

1. Shut down the StarWind VSAN CVM.

2. Open VM Hardware page in Proxmox and click Add -> PCI Device.

3. Choose PCIe Device from drop-down list.

4. Click Add.

5. Edit Memory. Uncheck Ballooning Device. Click OK.

6. Start VM.

7. Repeat steps 1-6 on all nodes.
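The same passthrough settings can be applied from the shell with qm. This is a hedged sketch, assuming VM ID 100 and a placeholder PCI address 0000:03:00.0 (the real address can be found with lspci). The pcie=1 flag relies on the q35 machine type chosen when the VM was created. The helper names are illustrative, and as written the commands are only printed.

```shell
#!/bin/sh
DRY_RUN=${DRY_RUN:-1}   # 1 = print commands only, 0 = execute on a Proxmox host

run() {
    if [ "$DRY_RUN" = "1" ]; then echo "$*"; else "$@"; fi
}

# attach_pci_device VMID PCI_ADDRESS
attach_pci_device() {
    vmid=$1
    pci_addr=$2
    run qm shutdown "$vmid"                            # step 1
    run qm set "$vmid" --hostpci0 "$pci_addr,pcie=1"   # steps 2-4
    run qm set "$vmid" --balloon 0                     # step 5: no ballooning device
    run qm start "$vmid"                               # step 6
}

# Placeholder values; replace with the real VM ID and device address.
attach_pci_device 100 0000:03:00.0
```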

Initial Configuration Wizard

1. Start the StarWind Virtual SAN Controller Virtual Machine.

2. Launch the VM console to view the VM boot process and obtain the IPv4 address of the Management network interface.

NOTE: If the VM does not acquire an IPv4 address from a DHCP server, use the Text-based User Interface (TUI) to set up the Management network manually.

Default credentials for TUI: user/rds123RDS

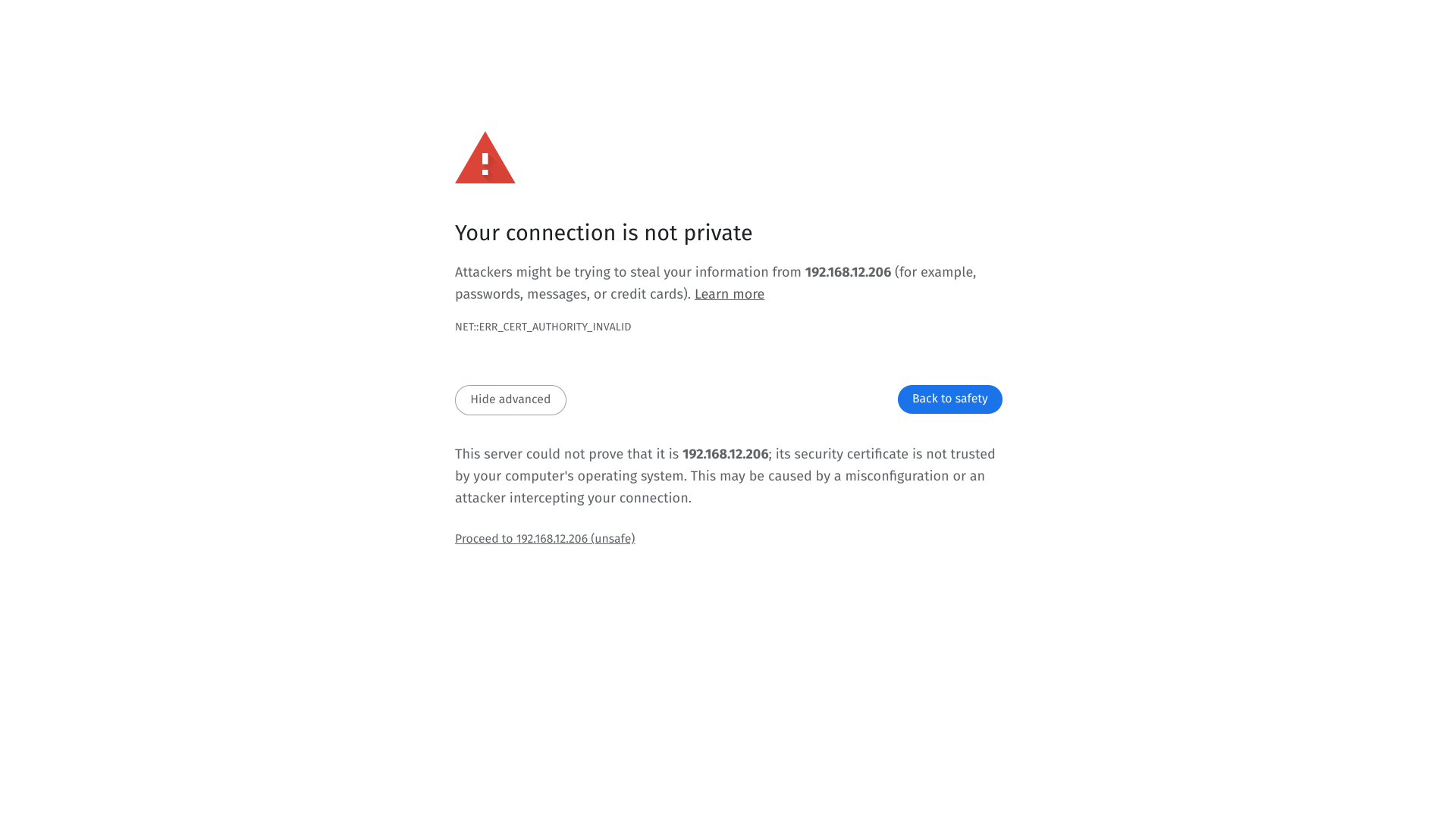

3. Using a web browser, open a new tab and enter the VM’s IPv4 address to access the StarWind VSAN Web Interface. On the Your connection is not private screen, click Advanced and then select Continue to…

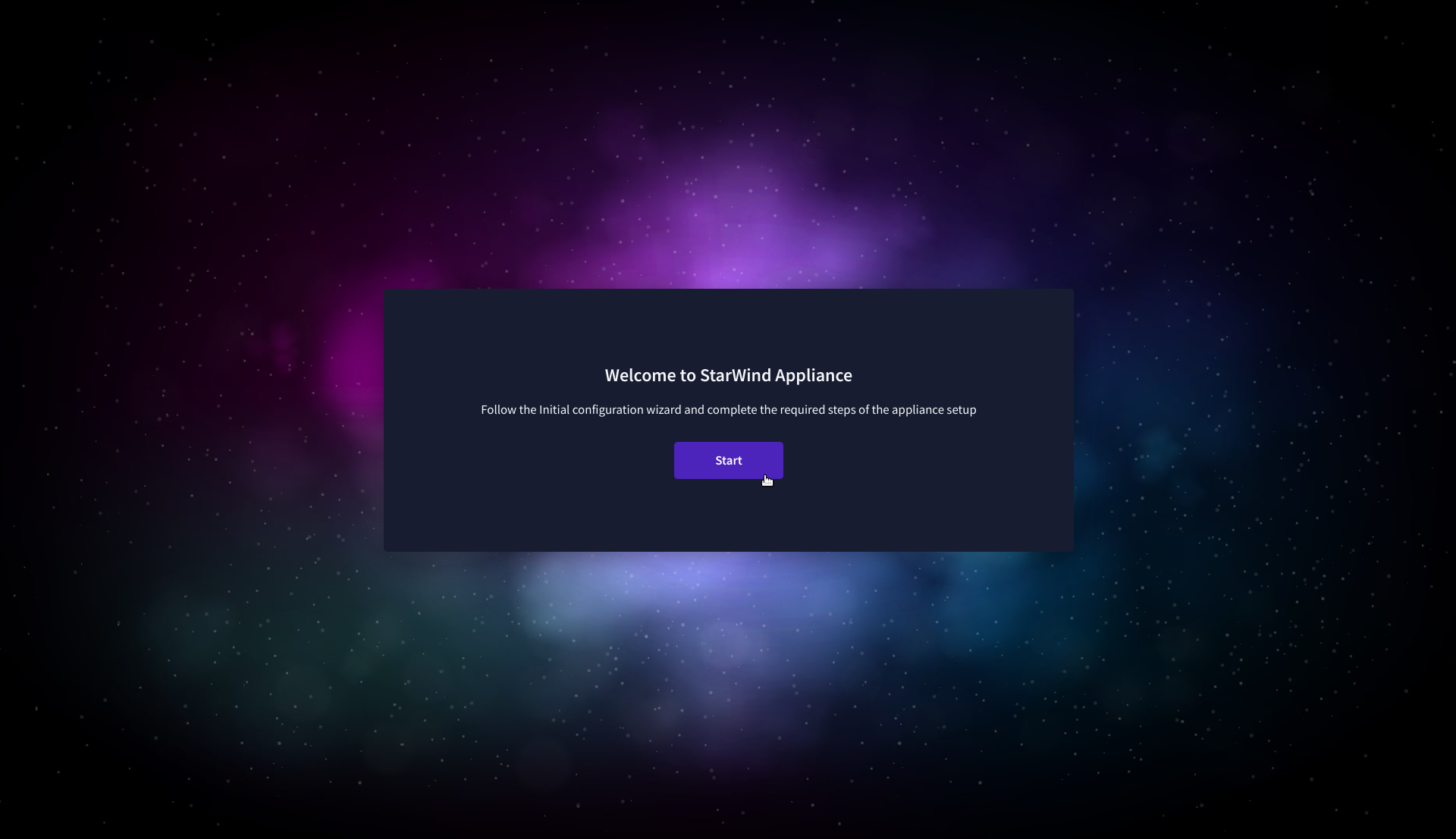

4. On the Welcome to StarWind Appliance screen, click Start to launch the Initial Configuration Wizard.

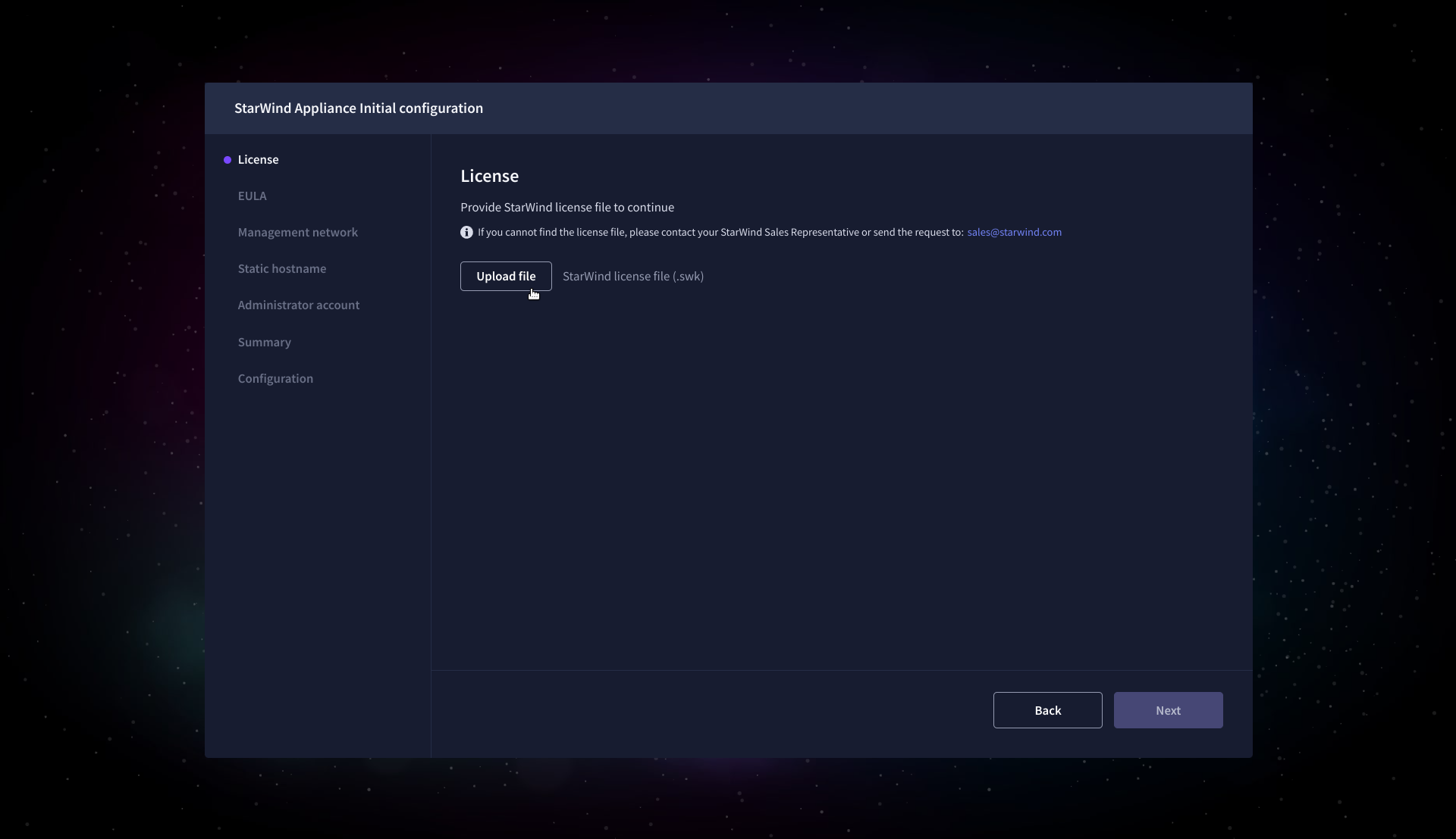

5. On the License step, upload the StarWind Virtual SAN license file.

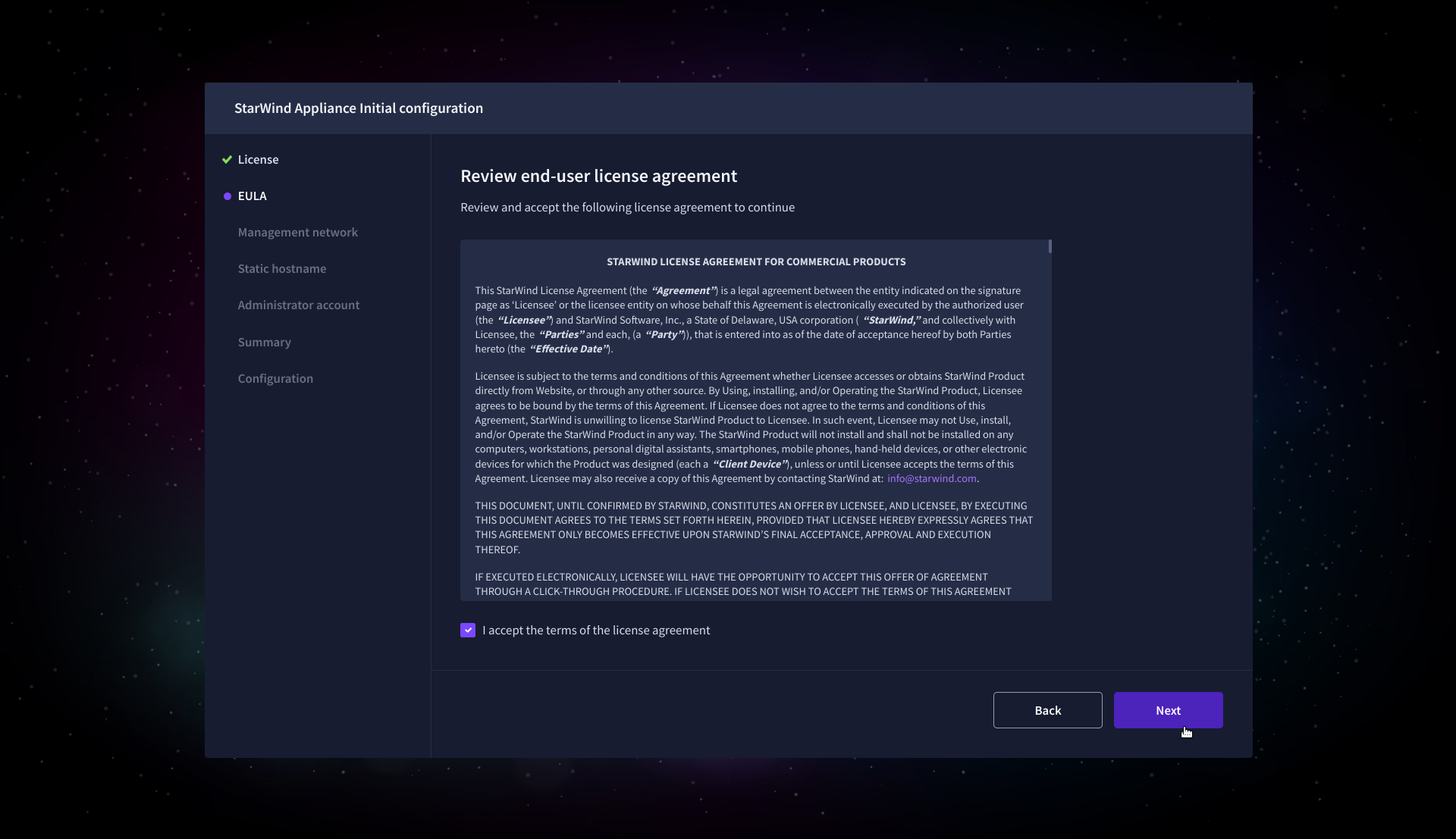

6. On the EULA step, read and accept the End User License Agreement to continue.

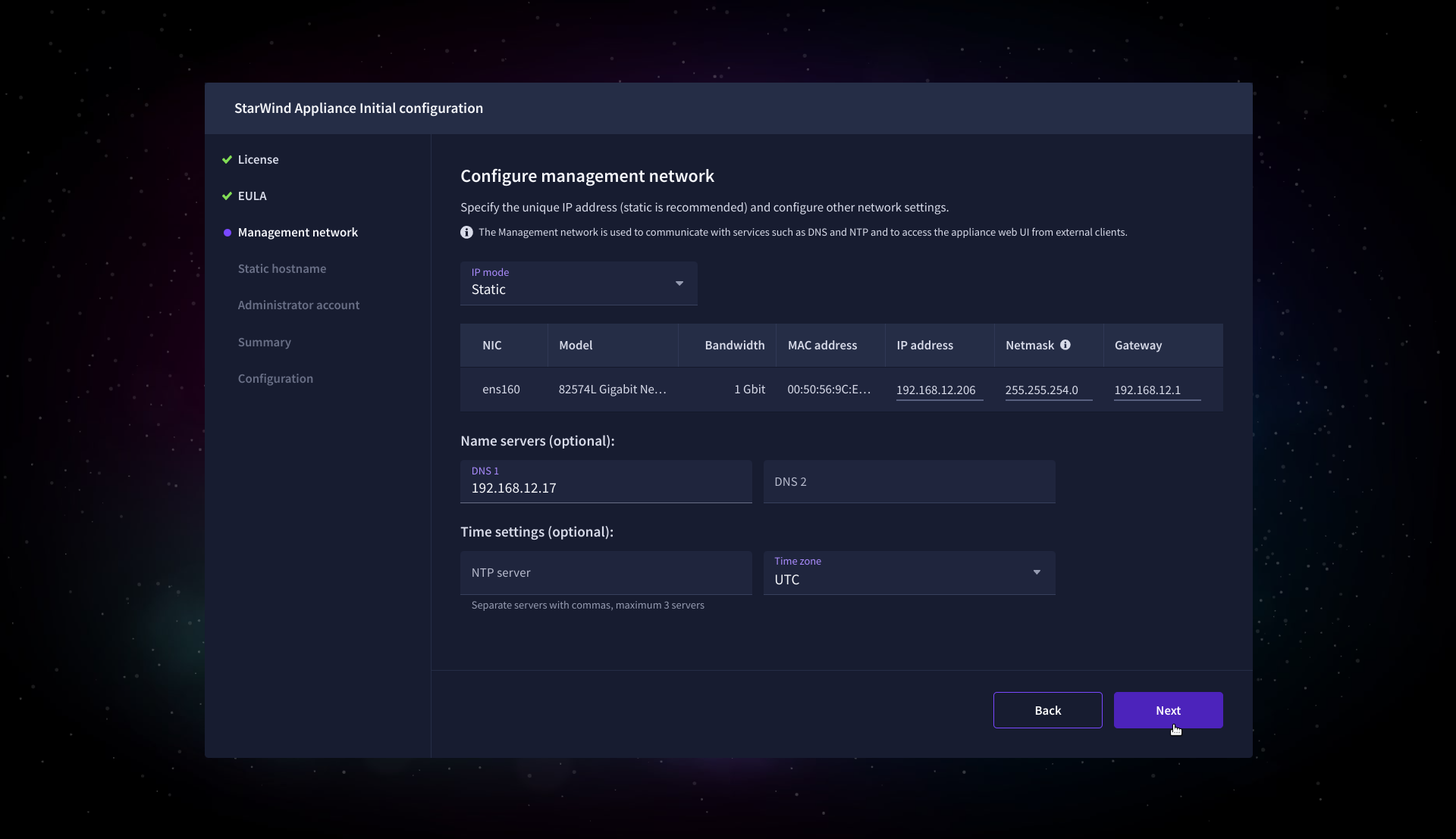

7. On the Management network step, review or edit the network settings and click Next.

IMPORTANT: The use of Static IP mode is highly recommended.

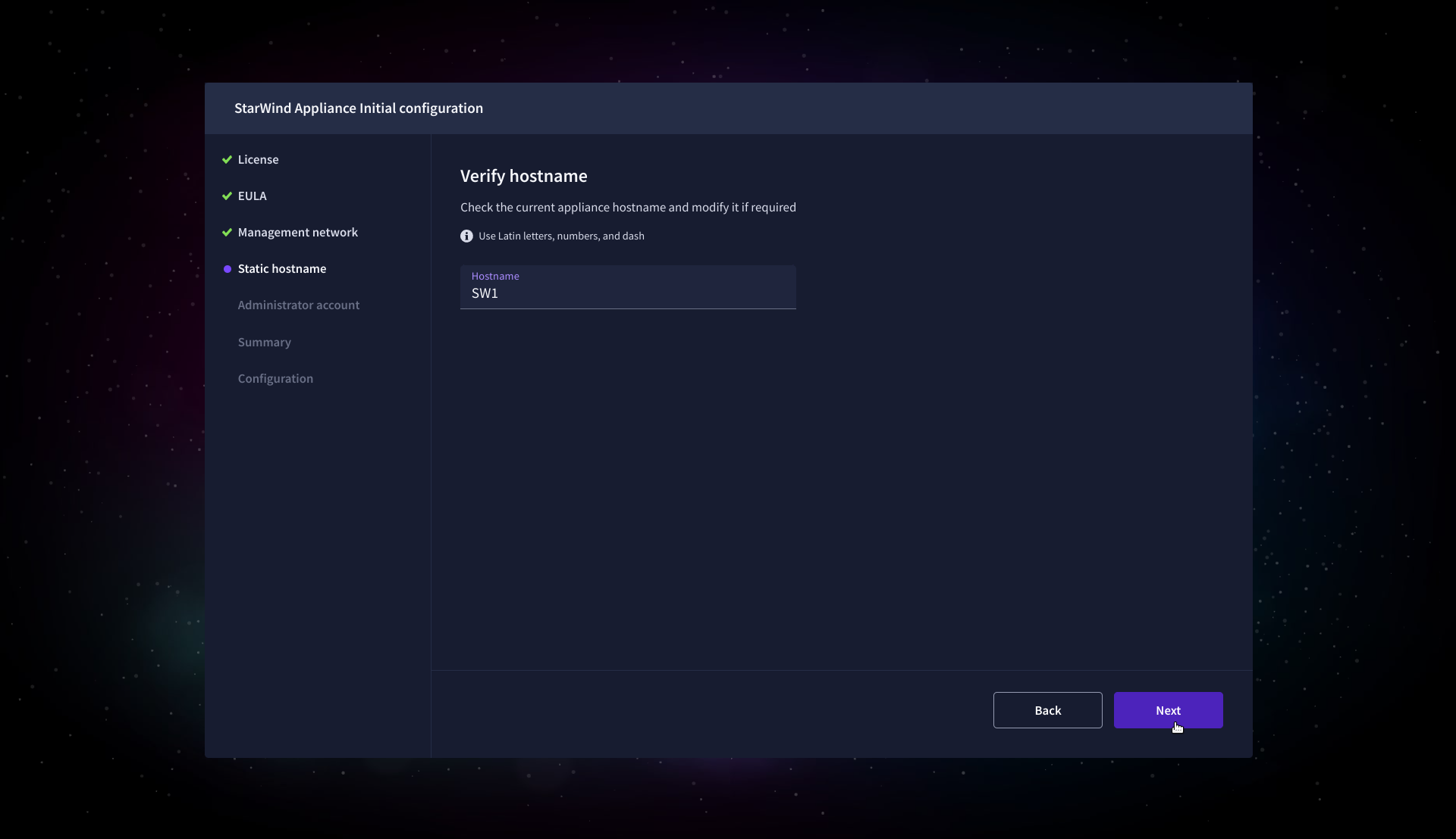

8. On the Static hostname step, specify the hostname for the virtual machine and click Next.

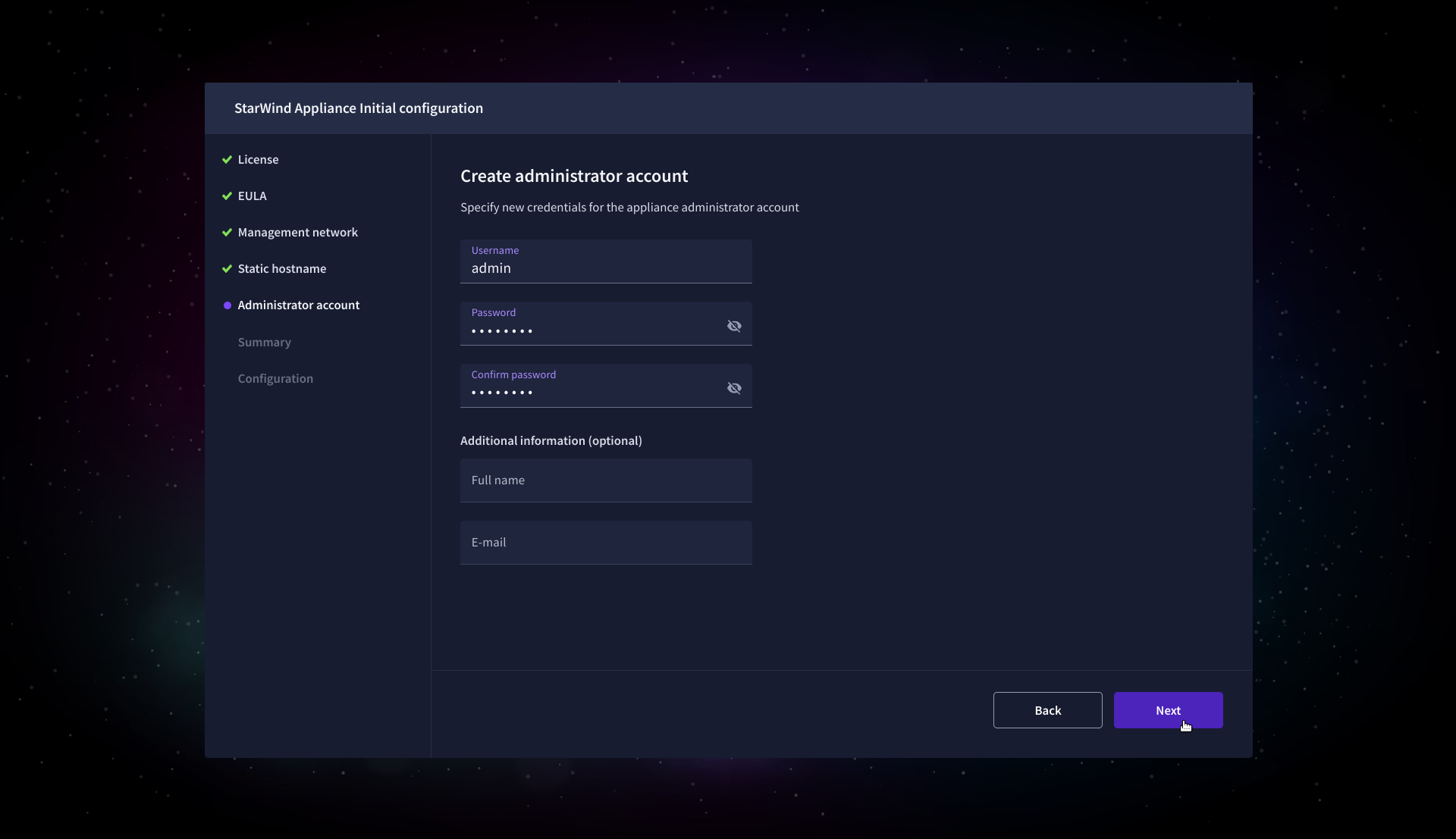

9. On the Administrator account step, specify the credentials for the new StarWind Virtual SAN administrator account and click Next.

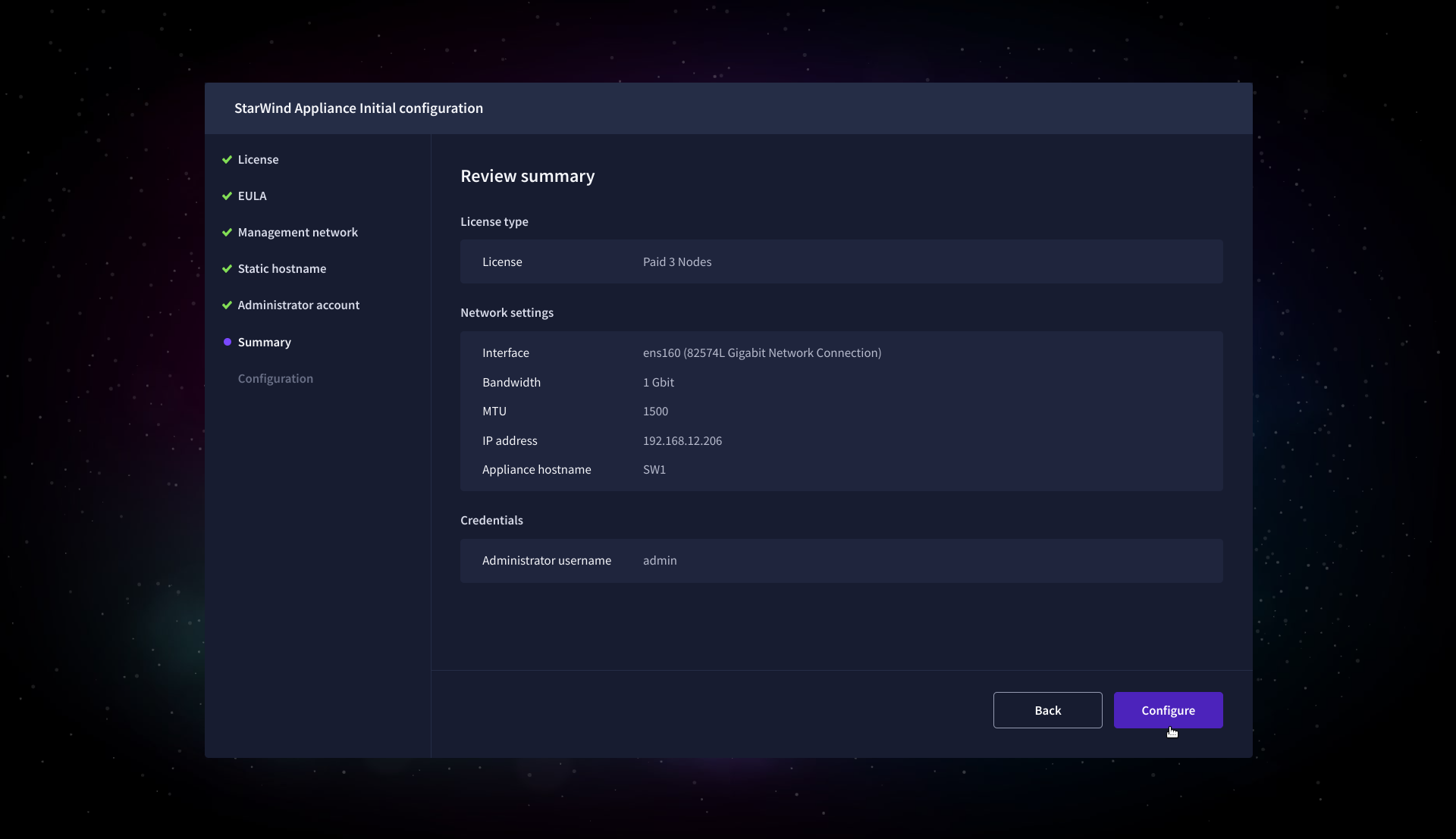

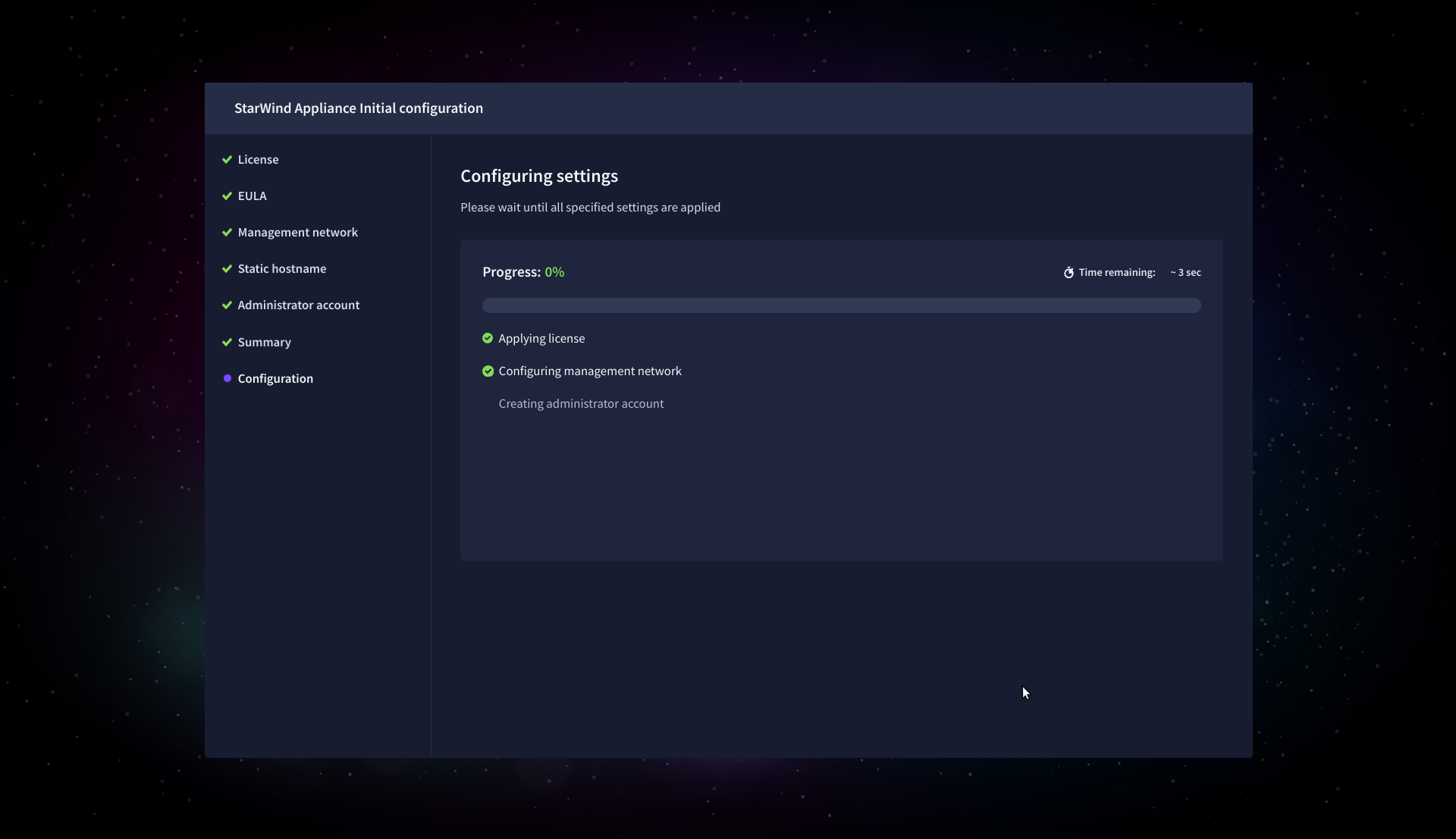

10. Wait until the Initial Configuration Wizard configures StarWind Virtual SAN for you.

11. Please standby until the Initial Configuration Wizard configures StarWind VSAN for you.

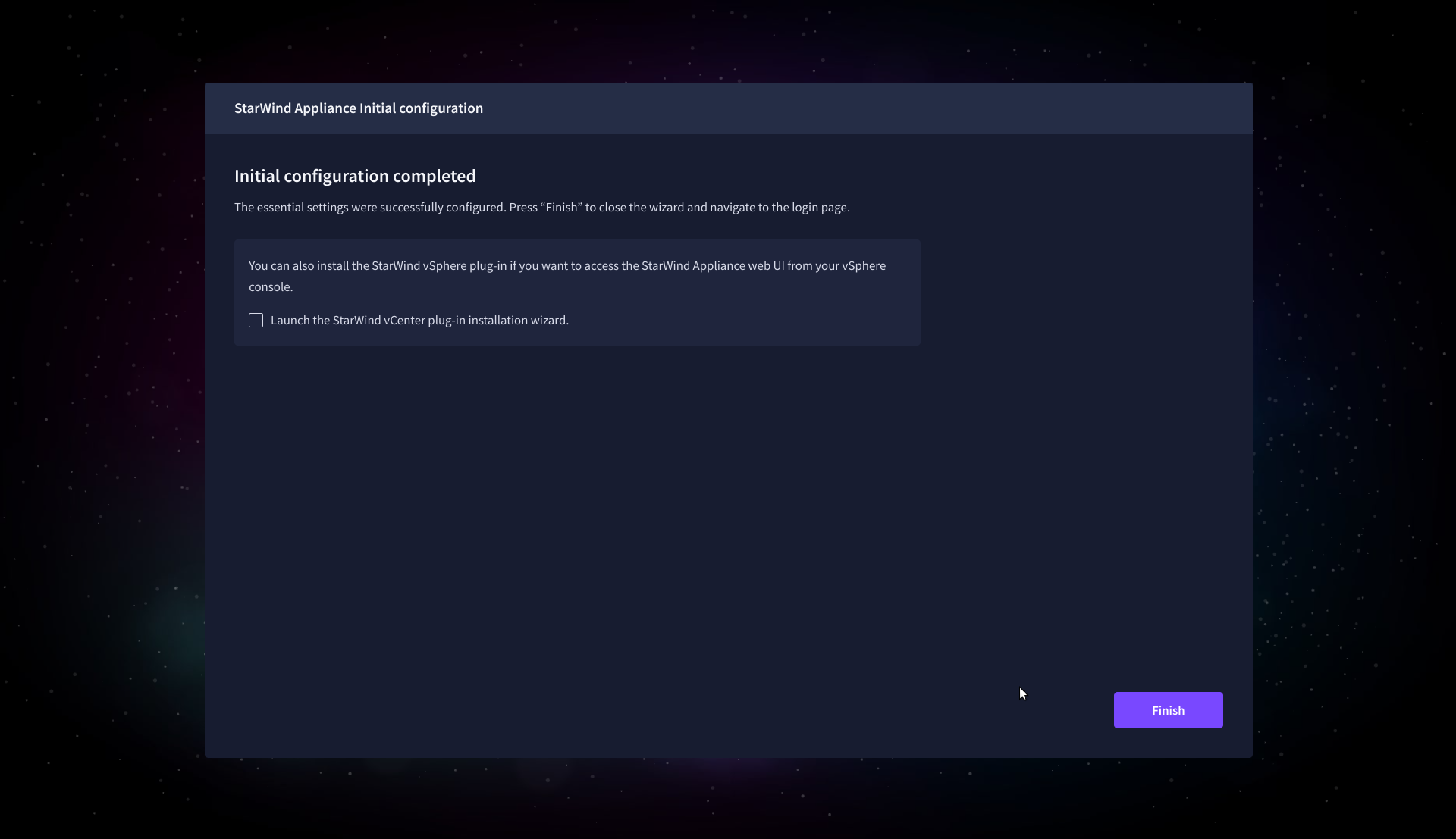

12. After the configuration process is completed, click Finish to install the StarWind vCenter Plugin immediately, or uncheck the checkbox to skip this step and proceed to the Login page.

13. Repeat steps 1 through 12 on each Windows Server host.

Add Appliance

To create replicated, highly available storage, add partner appliances that use the same StarWind Virtual SAN license key.

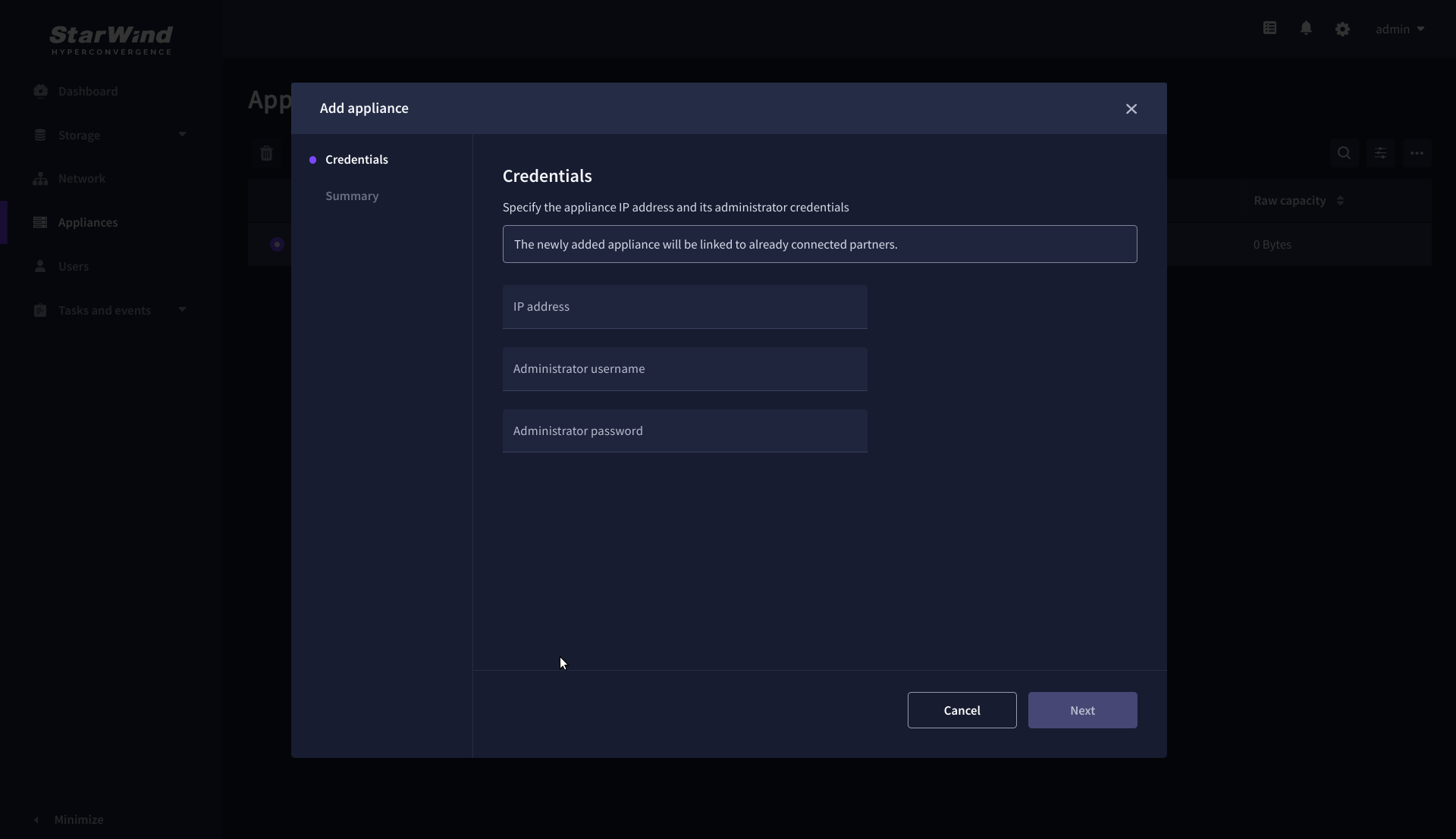

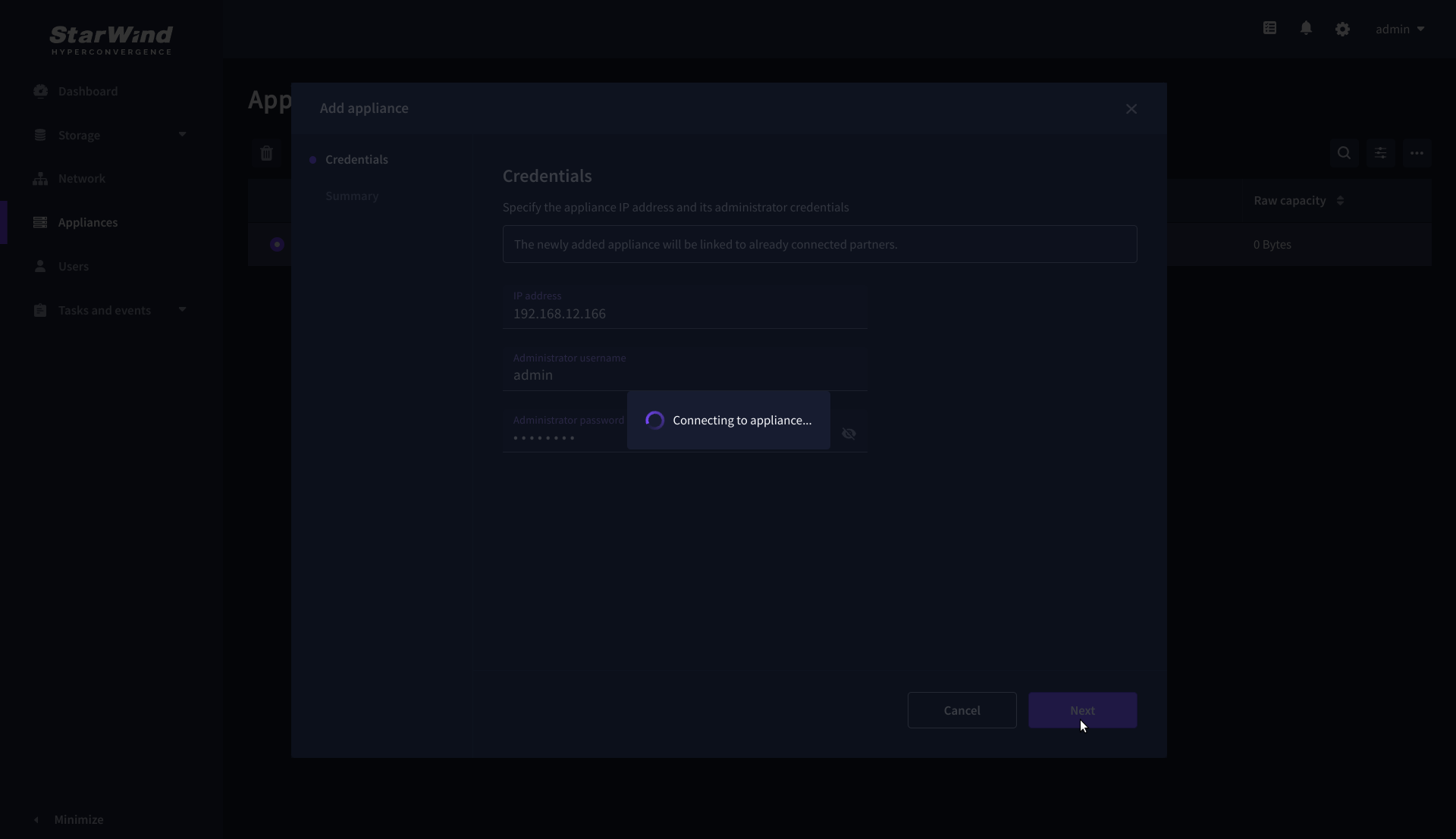

1. Navigate to the Appliances page and click Add to open the Add appliance wizard.

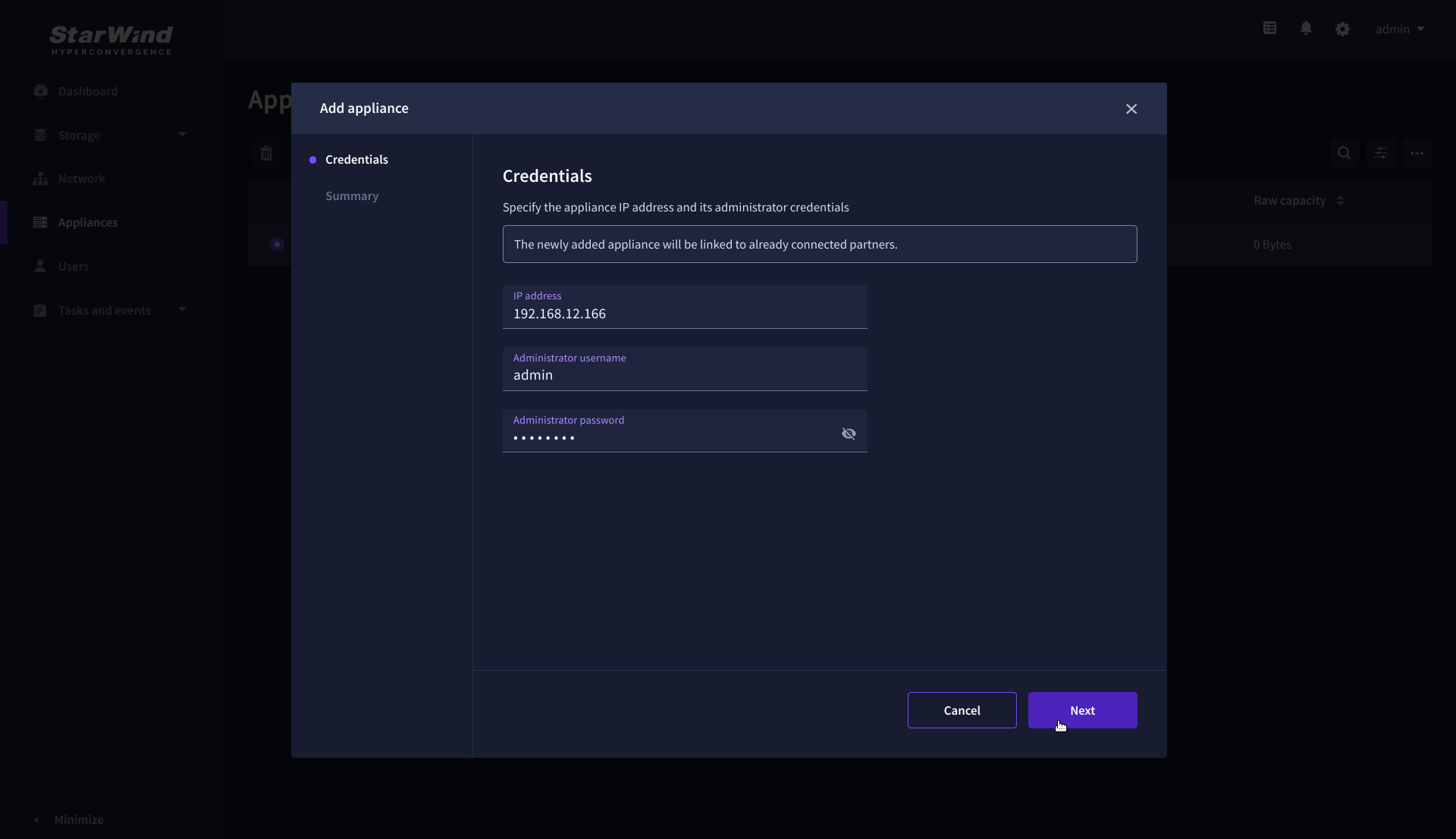

2. On the Credentials step, enter the IP address and credentials for the partner StarWind Virtual SAN appliance, then click Next.

3. Wait for the connection to be established and the settings to be validated.

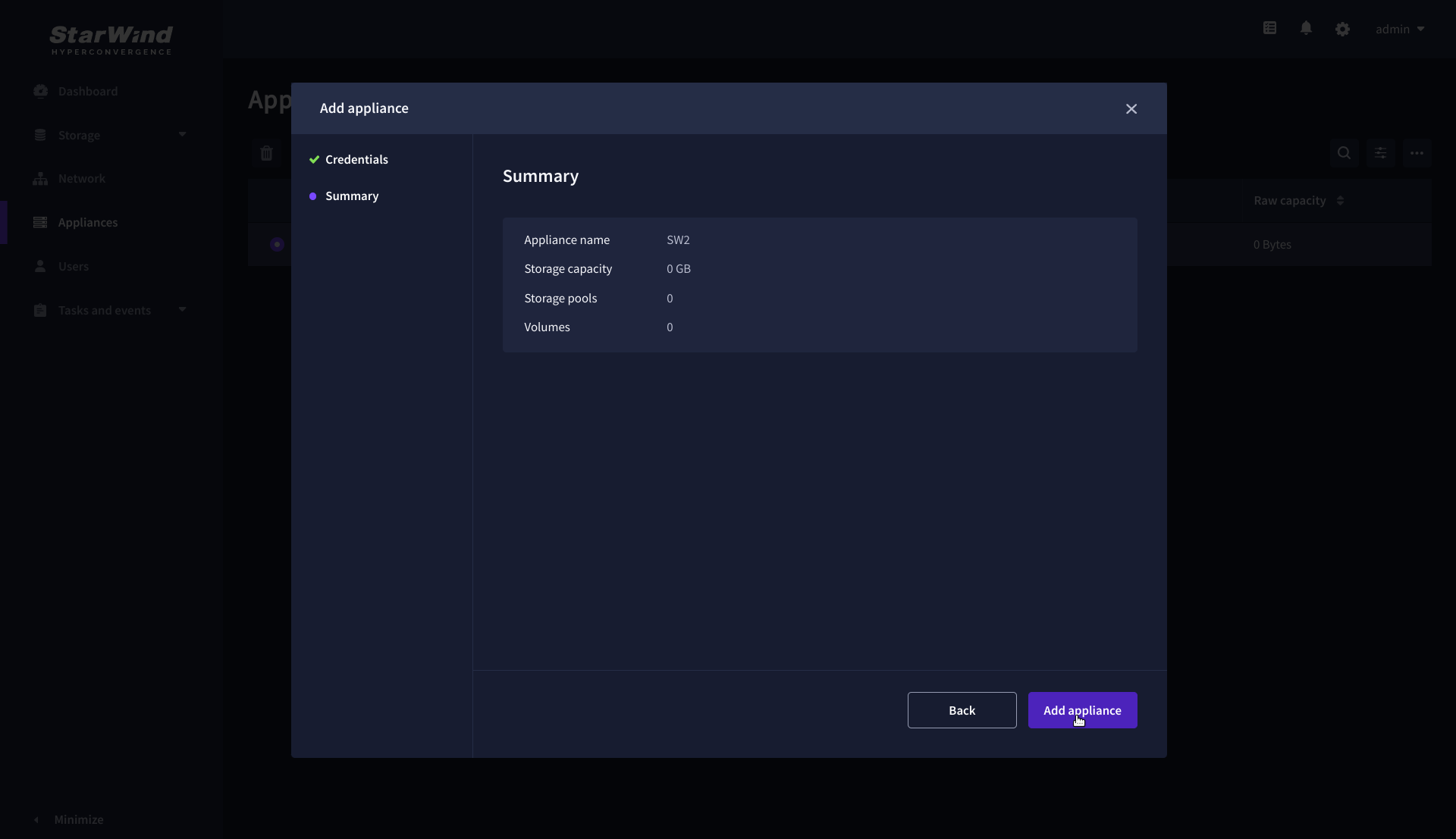

4. On the Summary step, review the properties of the partner appliance, then click Add Appliance.

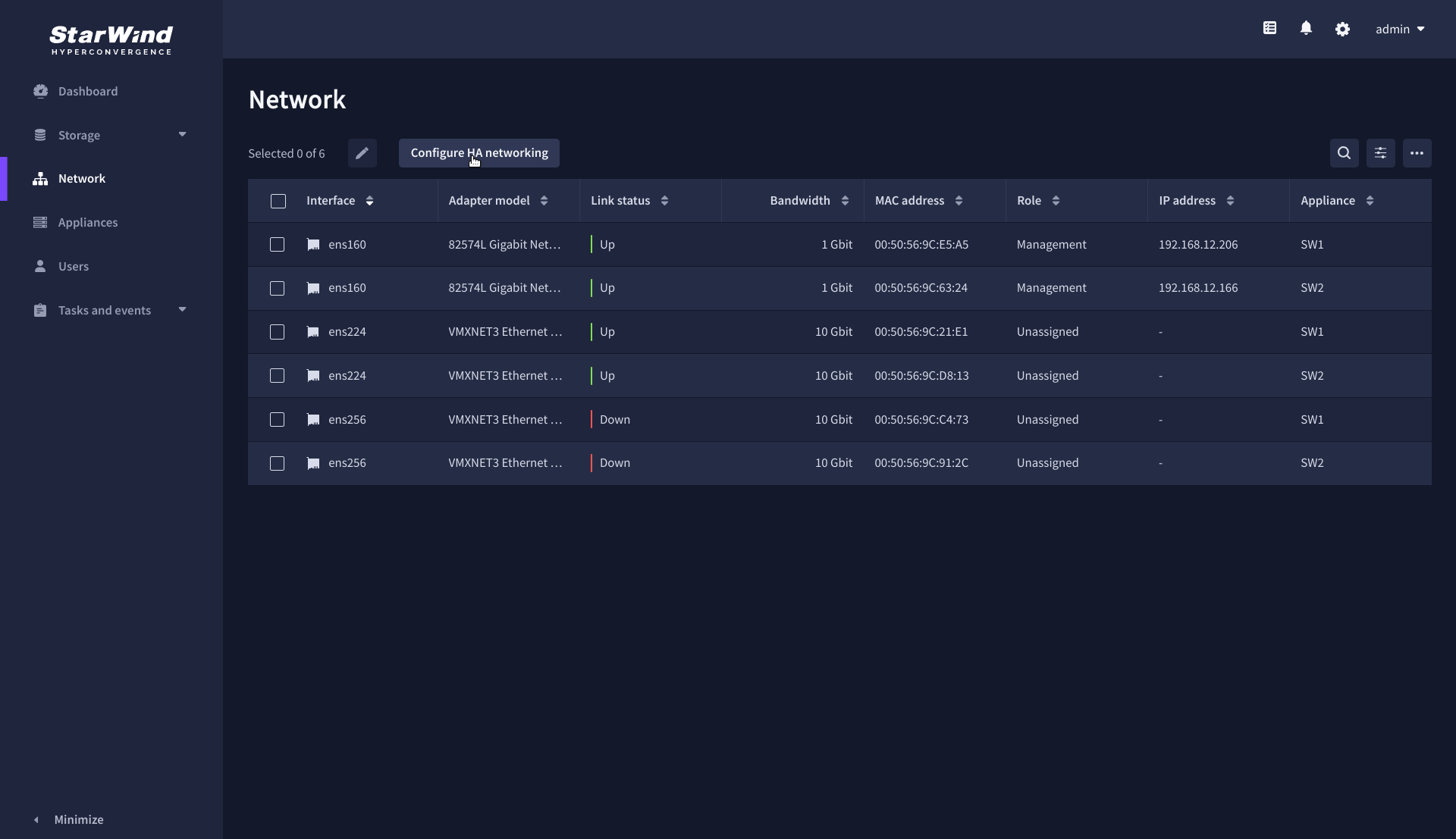

Configure HA networking

1. Navigate to the Network page and open the Configure HA networking wizard.

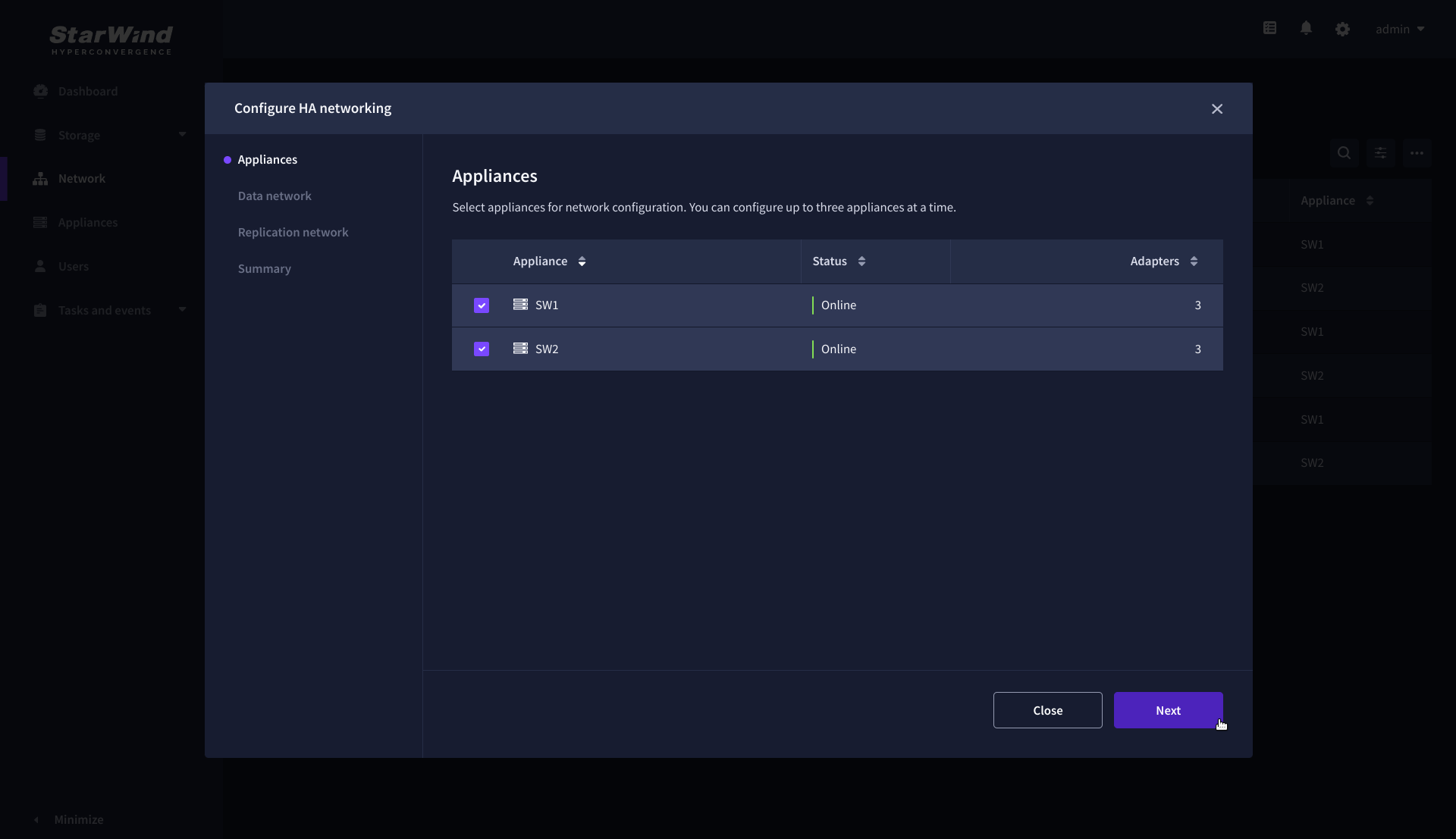

2. On the Appliances step, select either 2 partner appliances to configure two-way replication, or 3 appliances for three-way replication, then click Next.

NOTE: The number of appliances in the cluster is limited by your StarWind Virtual SAN license.

3. On the Data Network step, select the network interfaces designated to carry iSCSI or NVMe-oF storage traffic. Assign and configure at least one interface on each appliance (in our example: 172.16.10.10 and 172.16.10.20) with a static IP address in a unique network (subnet), specify the subnet mask and Cluster MTU size.

IMPORTANT: For a redundant, high-availability configuration, configure at least 2 network interfaces on each appliance. Ensure that the Data Network interfaces are interconnected between appliances through multiple direct links or via redundant switches.

4. Assign MTU value on all selected network adapters, e.g. 1500 or 9000 bytes. If you are using network switches with the selected Data Network adapters, ensure that they are configured with the same MTU size value. In case of MTU settings mismatch, stability and performance issues might occur on the whole setup.

NOTE: Setting MTU to 9000 bytes on some physical adapters (like Intel Ethernet Network Adapter X710, Broadcom network adapters, etc.) might cause stability and performance issues depending on the installed network driver. To avoid them, use 1500 bytes MTU size or install the stable version of the driver.

5. Once configured, click Next to validate network settings.

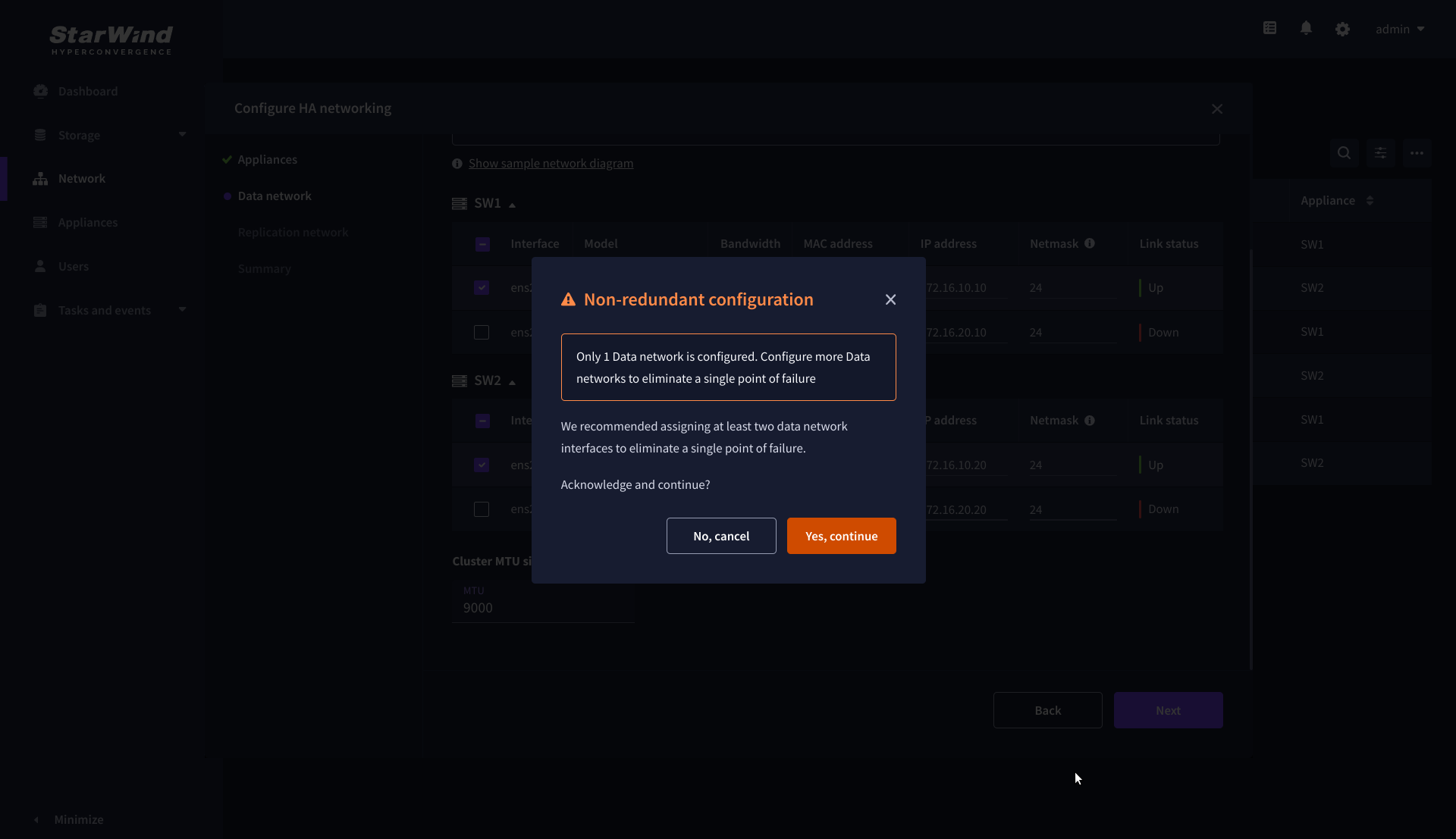

6. A warning might appear if only a single data interface is configured. Click Yes, continue to proceed with the configuration.

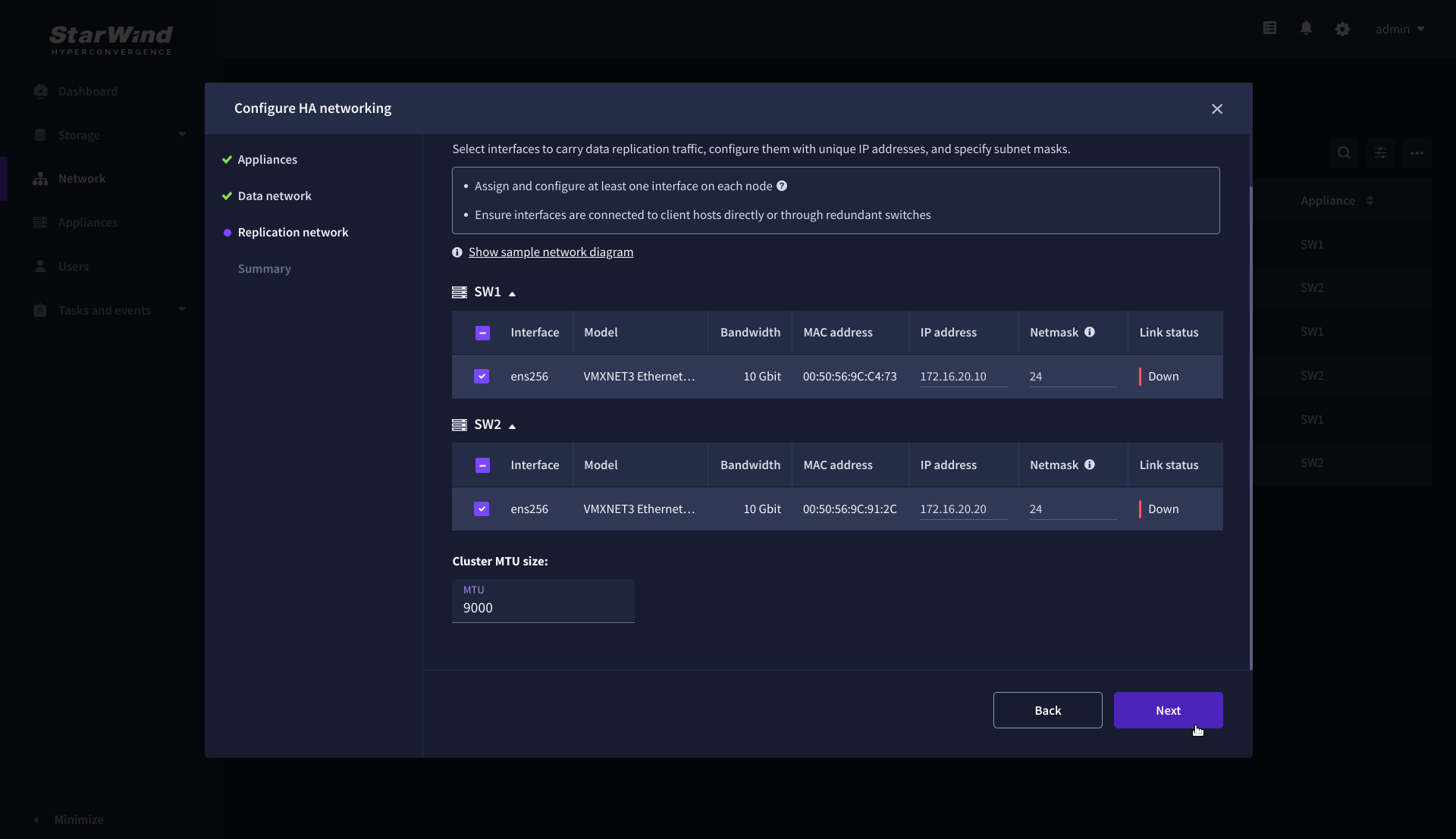

7. On the Replication Network step, select the network interfaces designated to carry the traffic for synchronous replication. Assign and configure at least one interface on each appliance with a static IP address in a unique network (subnet), specify the subnet mask and Cluster MTU size.

IMPORTANT: For a redundant, high-availability configuration, configure at least 2 network interfaces on each appliance. Ensure that the Replication Network interfaces are interconnected between appliances through multiple direct links or via redundant switches.

8. Assign MTU value on all selected network adapters, e.g. 1500 or 9000 bytes. If you are using network switches with the selected Replication Network adapters, ensure that they are configured with the same MTU size value. In case of MTU settings mismatch, stability and performance issues might occur on the whole setup.

NOTE: Setting MTU to 9000 bytes on some physical adapters (like Intel Ethernet Network Adapter X710, Broadcom network adapters, etc.) might cause stability and performance issues depending on the installed network driver. To avoid them, use 1500 bytes MTU size or install the stable version of the driver.

9. Once configured, click Next to validate network settings.

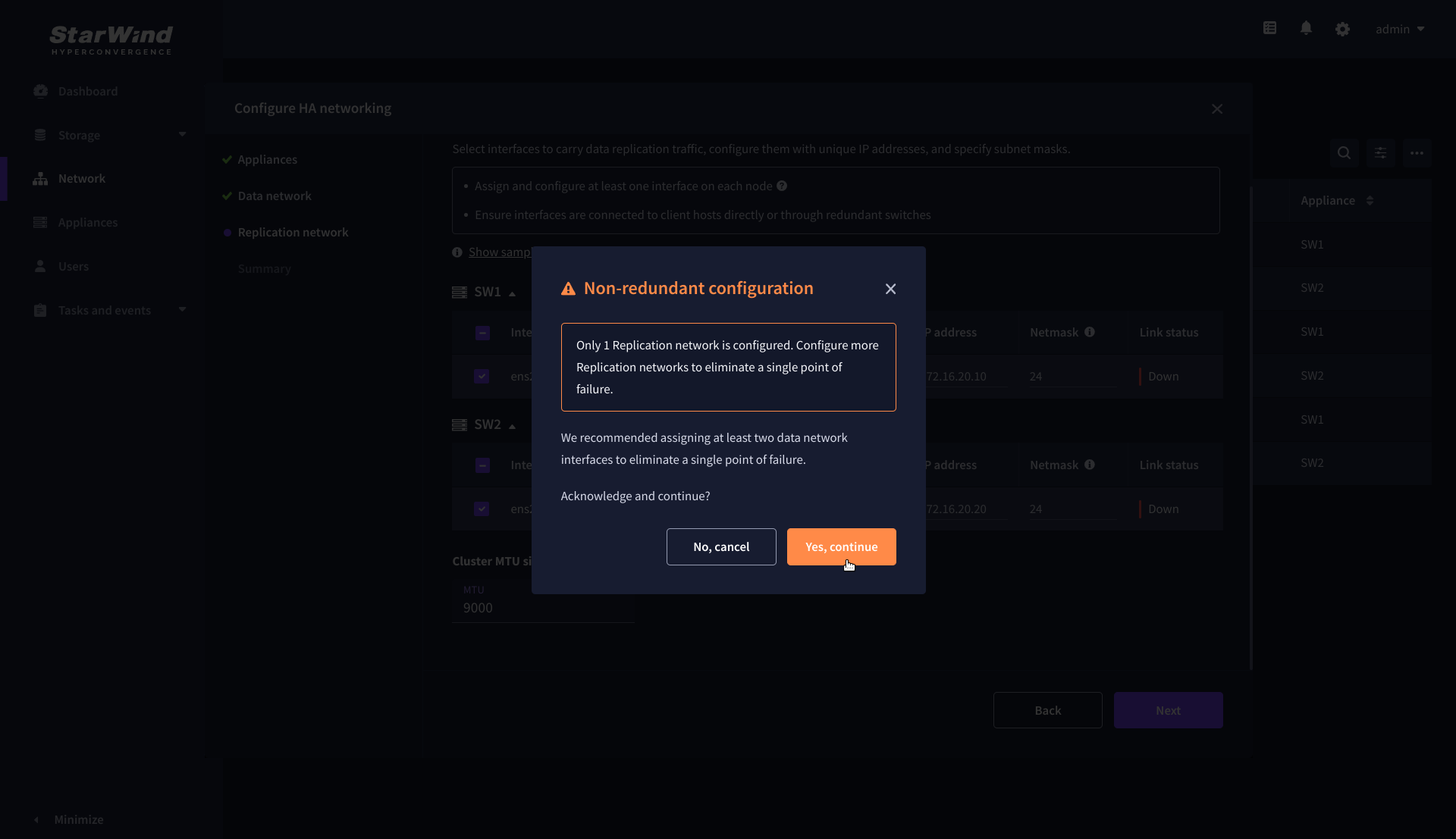

10. If only one Replication Network interface is configured on each partner appliance, a warning message will pop up. Click Yes, continue to acknowledge the warning and proceed.

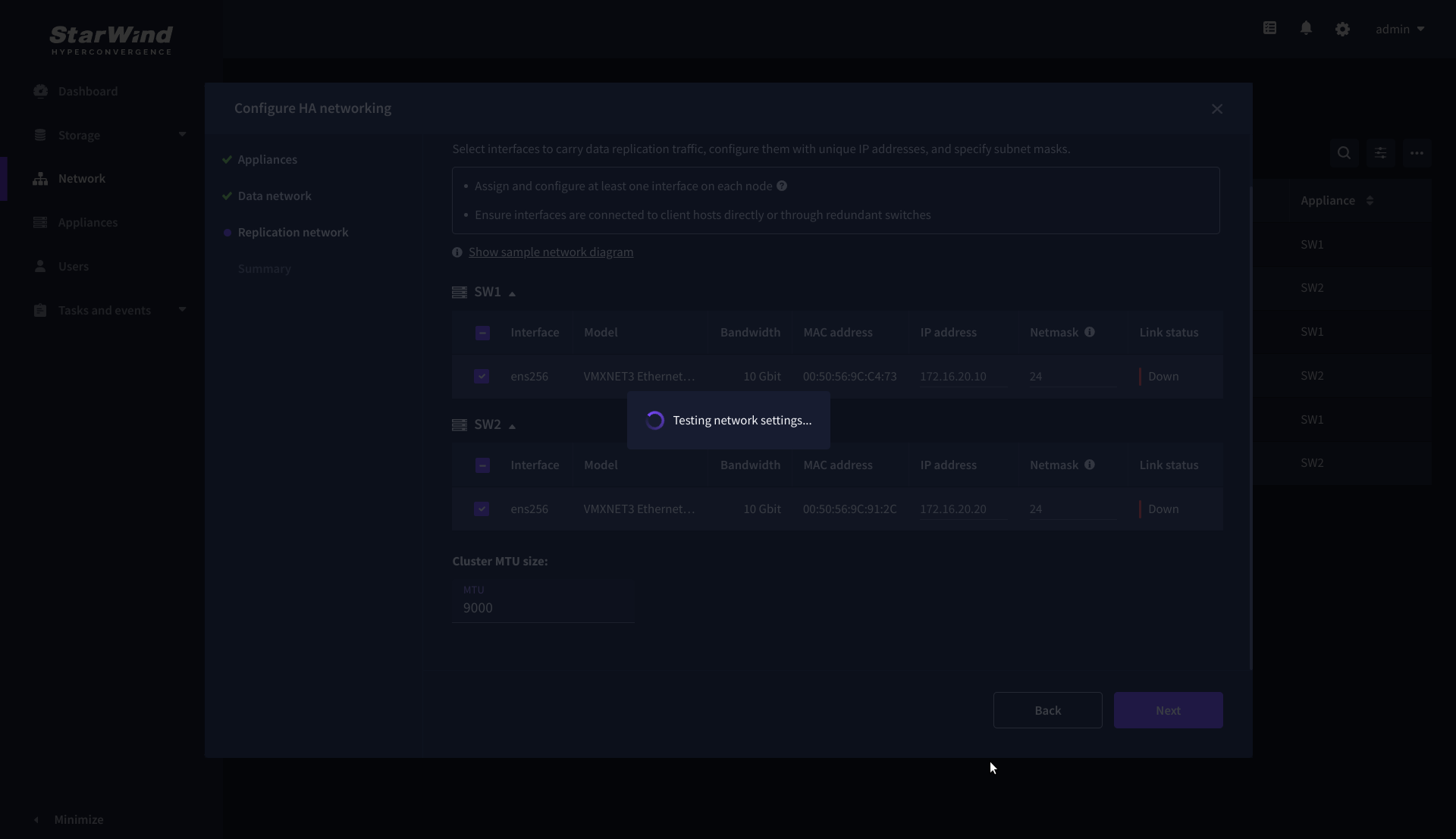

11. Wait for the configuration to complete.

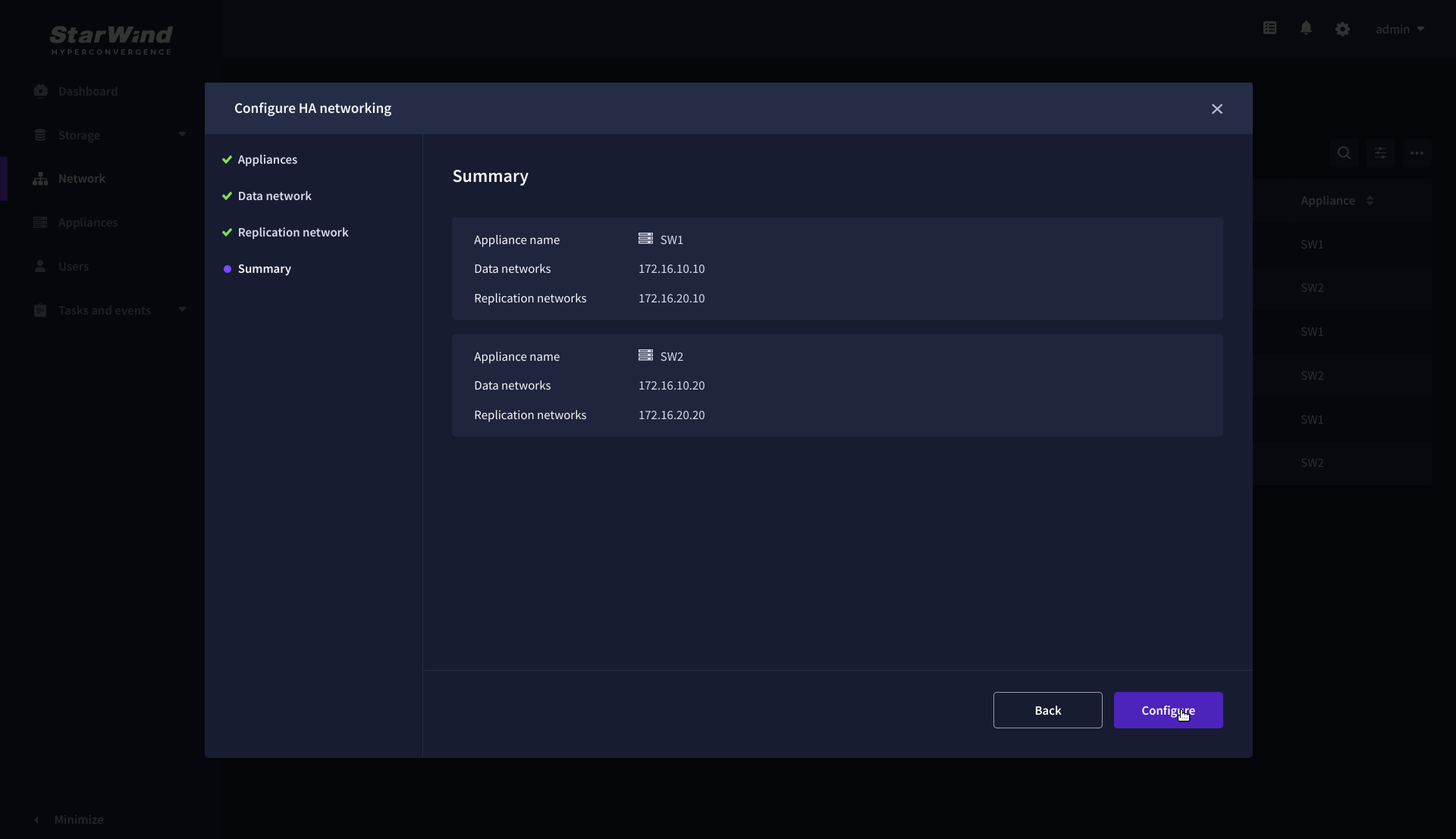

12. On the Summary step, review the specified network settings and click Configure to apply the changes.
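An MTU mismatch along a Data or Replication link can be detected by sending ICMP packets that are not allowed to fragment. The sketch below assumes the Linux iputils ping and IPv4, and the helper names are illustrative. The ICMP payload has to be 28 bytes smaller than the MTU under test (20-byte IPv4 header plus 8-byte ICMP header).

```shell
#!/bin/sh
# Largest ICMP payload that fits into one unfragmented IPv4 packet of the given MTU.
icmp_payload_for_mtu() {
    echo $(( $1 - 28 ))
}

# check_mtu_path PEER_IP MTU
# Sends three probes with the Don't Fragment bit set (-M do).
check_mtu_path() {
    peer=$1
    mtu=$2
    ping -M do -c 3 -s "$(icmp_payload_for_mtu "$mtu")" "$peer"
}

echo "MTU 9000 -> payload $(icmp_payload_for_mtu 9000)"
echo "MTU 1500 -> payload $(icmp_payload_for_mtu 1500)"
```

For example, check_mtu_path 172.16.10.20 9000 run from the node that owns 172.16.10.10 exercises the example Data Network link with jumbo frames. If the probes fail while a run with MTU 1500 succeeds, some interface or switch on the path is likely configured with a smaller MTU.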

Add physical disks

Attach physical storage to StarWind Virtual SAN Controller VM:

- Ensure that all physical drives are connected through an HBA or RAID controller.

- To get the optimal storage performance, add HBA, RAID controllers, or NVMe SSD drives to StarWind CVM via a passthrough device.

For detailed instructions, refer to the Attaching PCIe device section of this guide. Also, find the storage provisioning guidelines in the KB article.

Create Storage Pool

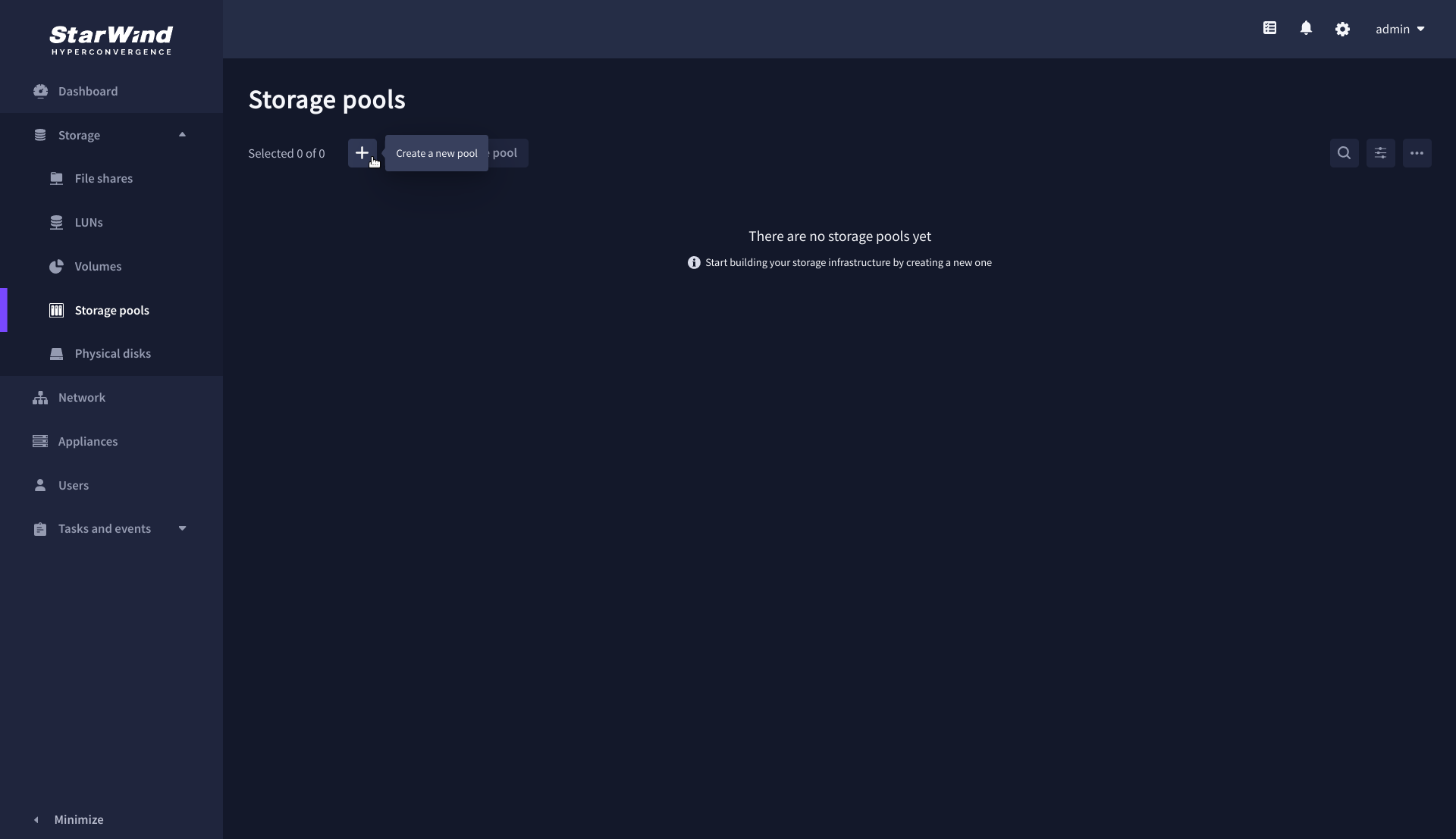

1. Navigate to the Storage pools page and click the + button to open the Create storage pool wizard.

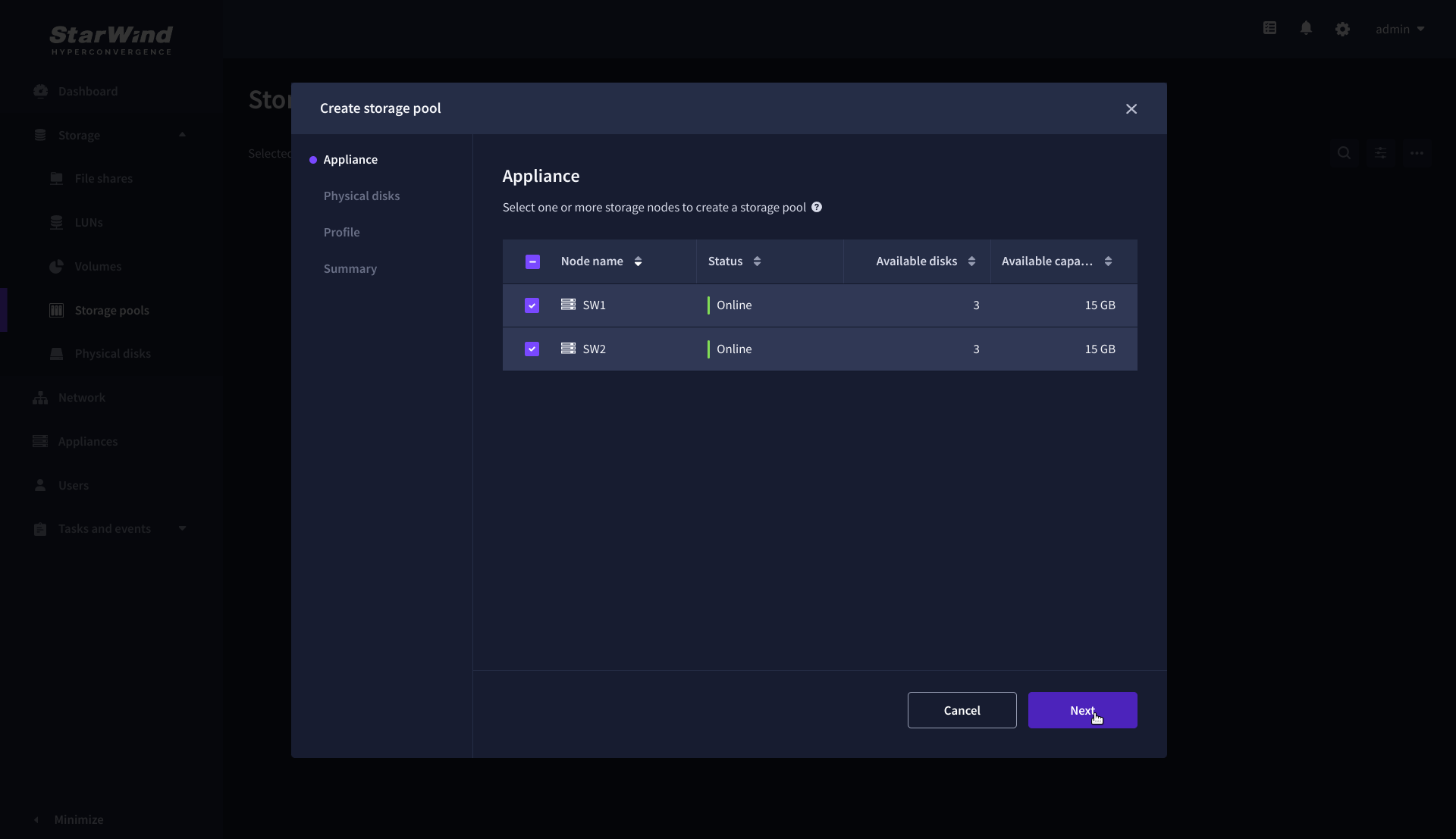

2. On the Appliance step, select partner appliances on which to create new storage pools, then click Next.

NOTE: Select 2 appliances for configuring storage pools if you are deploying a two-node cluster with two-way replication, or select 3 appliances for configuring a three-node cluster with a three-way mirror.

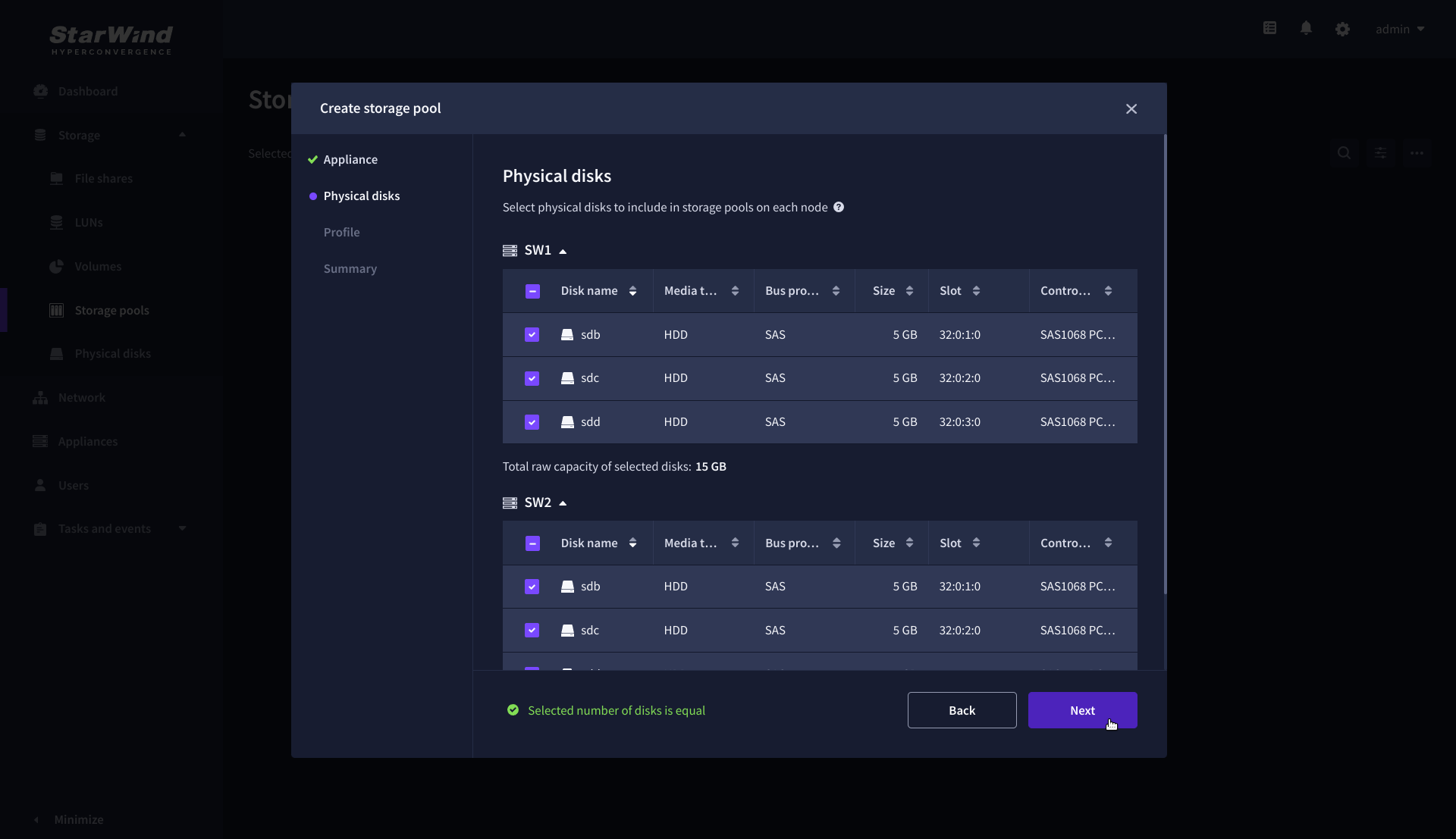

3. On the Physical disks step, select physical disks to be pooled on each node, then click Next.

IMPORTANT: Select an identical type and number of disks on each appliance to create storage pools with a uniform configuration.

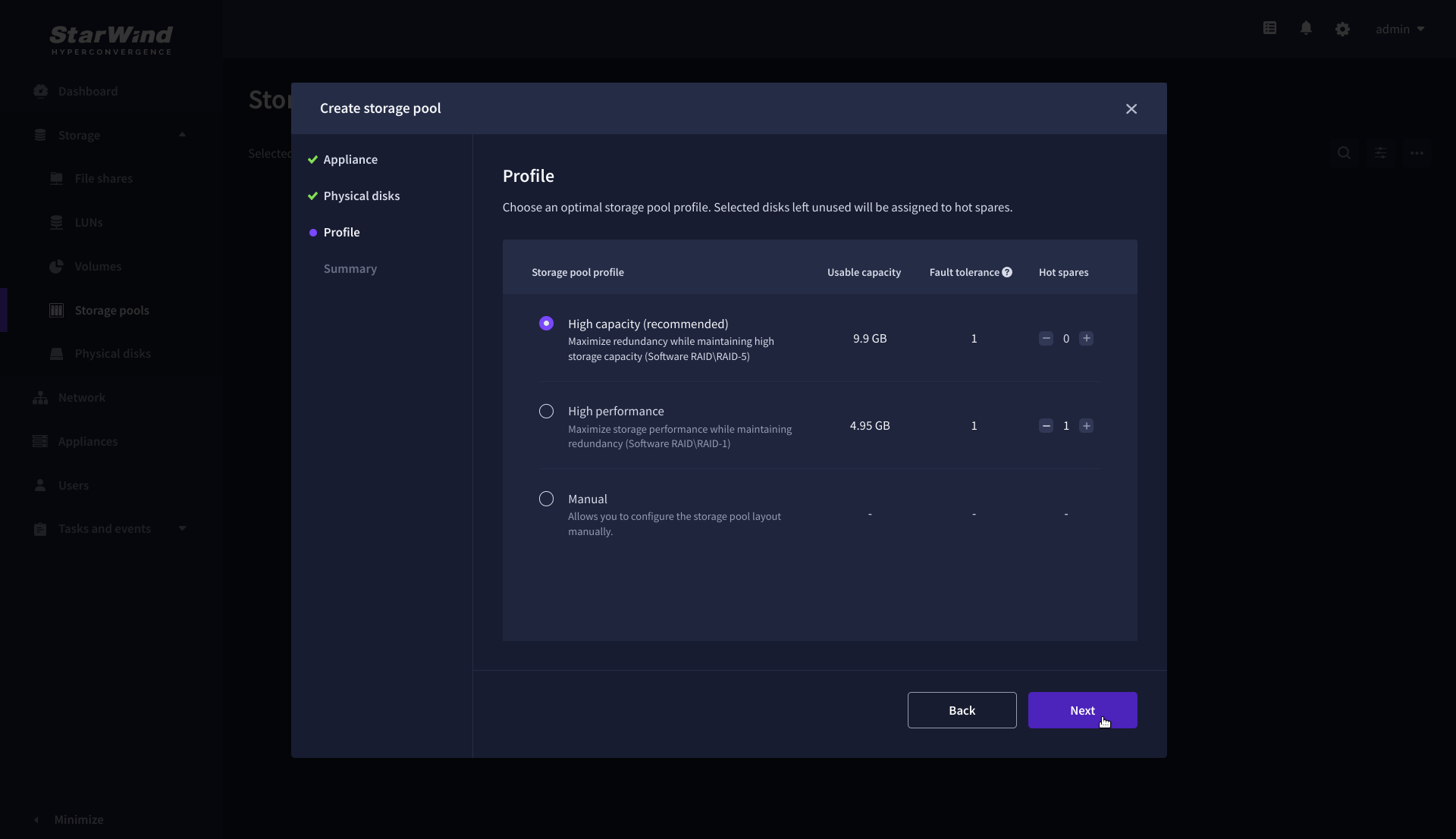

4. On the Profile step, select one of the preconfigured storage profiles, or choose Manual to configure the storage pool manually based on your redundancy, capacity, and performance requirements, then click Next.

NOTE: Hardware RAID, Linux Software RAID, and ZFS storage pools are supported. To simplify the configuration of storage pools, preconfigured storage profiles are provided. These profiles recommend a pool type and layout based on the attached storage:

- High capacity – creates Linux Software RAID-5 to maximize storage capacity while maintaining redundancy.

- High performance – creates Linux Software RAID-10 to maximize storage performance while maintaining redundancy.

- Hardware RAID – configures a hardware RAID virtual disk as a storage pool. This option is available only if a hardware RAID controller is passed through to the StarWind Virtual SAN.

- Better redundancy – creates ZFS Striped RAID-Z2 (RAID 60) to maximize redundancy while maintaining high storage capacity.

- Manual – allows users to configure any storage pool type and layout with the attached storage.

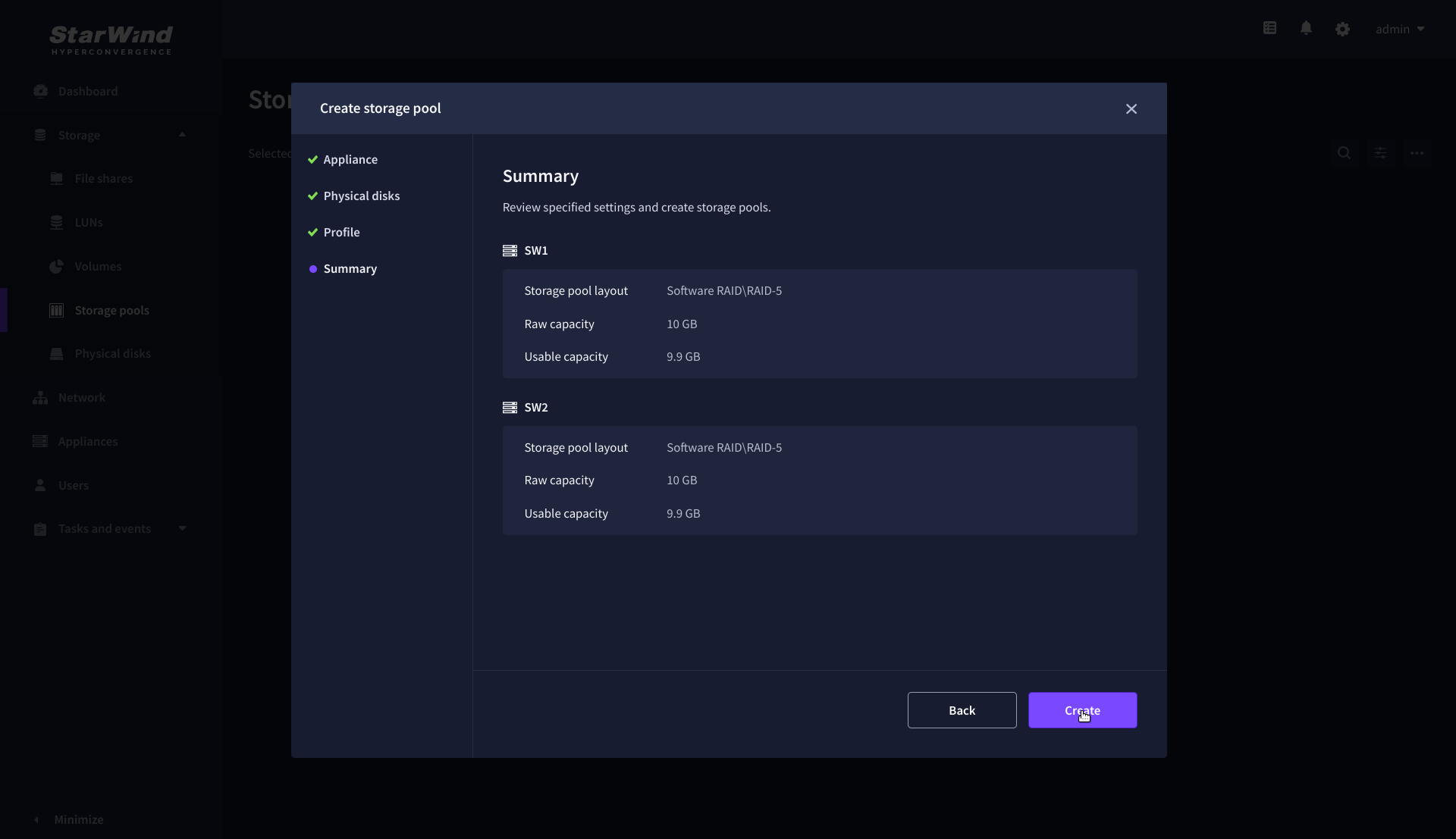

5. On the Summary step, review the storage pool settings and click Create to configure new storage pools on the selected appliances.

NOTE: The storage pool configuration may take some time, depending on the type of pooled storage and the total storage capacity. Once the pools are created, a notification will appear in the upper right corner of the Web UI.

IMPORTANT: In some cases, additional tweaks are required to optimize the storage performance of the disks added to the Controller Virtual Machine. Please follow the steps in this KB to change the scheduler type depending on the disk type: https://knowledgebase.starwindsoftware.com/guidance/starwind-vsan-for-vsphere-changing-linux-i-o-scheduler-to-optimize-storage-performance/

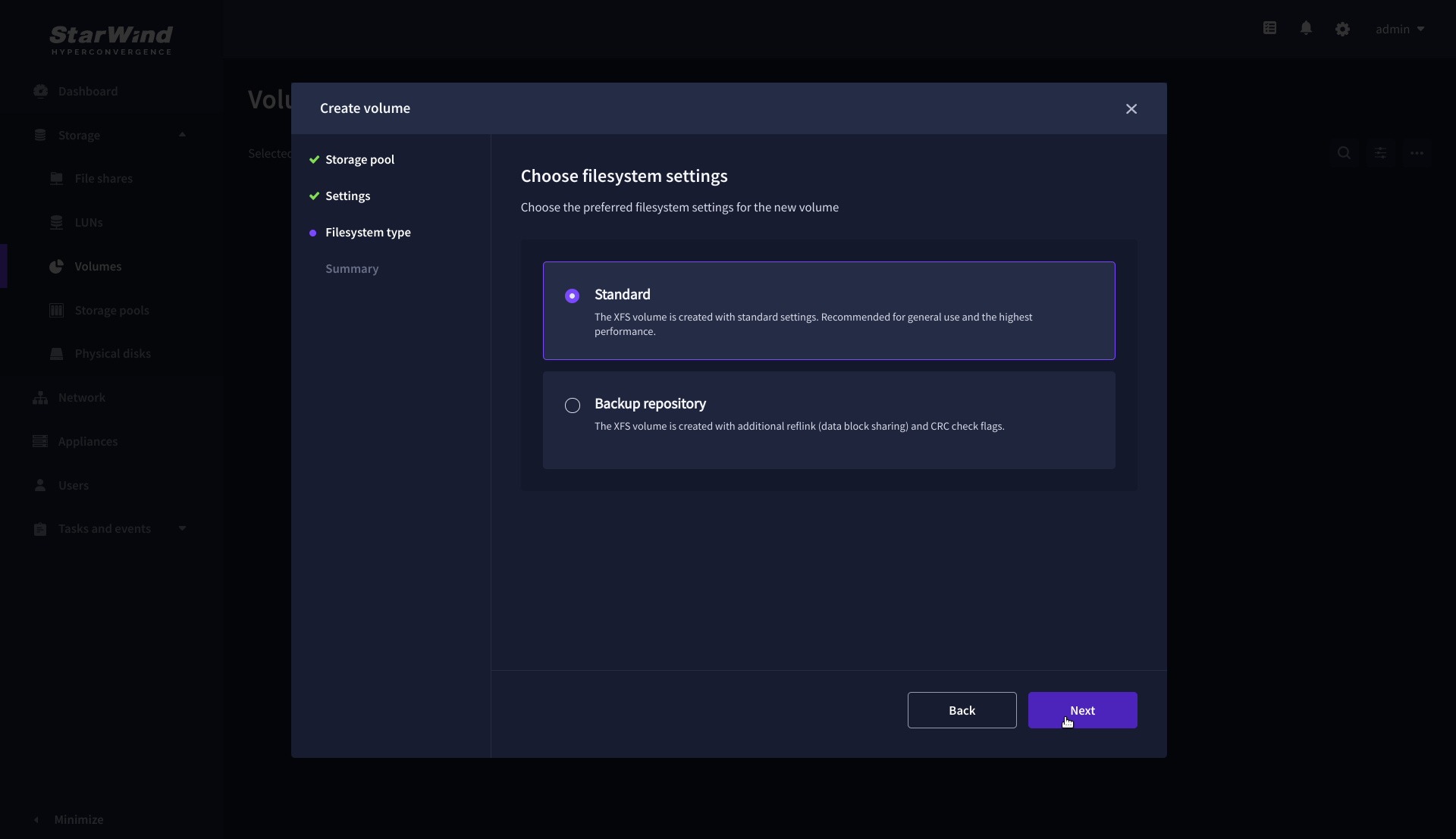
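When choosing between the storage profiles from step 4, the usable capacity of each layout can be estimated from the disk count. This is back-of-the-envelope arithmetic that assumes equally sized disks and ignores filesystem and metadata overhead. The function name and the profile keywords are invented for this example and do not correspond to StarWind settings.

```shell
#!/bin/sh
# usable_capacity PROFILE DISKS SIZE_GB [GROUPS]
#   raid5   one disk's worth of parity
#   raid10  half of the disks hold mirror copies
#   raidz2  two parity disks per RAID-Z2 group, GROUPS groups striped together
usable_capacity() {
    profile=$1
    disks=$2
    size=$3
    groups=${4:-1}
    case $profile in
        raid5)  echo $(( ($disks - 1) * $size )) ;;
        raid10) echo $(( $disks / 2 * $size )) ;;
        raidz2) echo $(( ($disks - 2 * $groups) * $size )) ;;
        *)      echo "unknown profile: $profile" >&2; return 1 ;;
    esac
}

echo "RAID-5,  6 x 1000 GB:            $(usable_capacity raid5 6 1000) GB"
echo "RAID-10, 6 x 1000 GB:            $(usable_capacity raid10 6 1000) GB"
echo "RAID-Z2, 12 x 1000 GB, 2 groups: $(usable_capacity raidz2 12 1000 2) GB"
```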

Create Volume

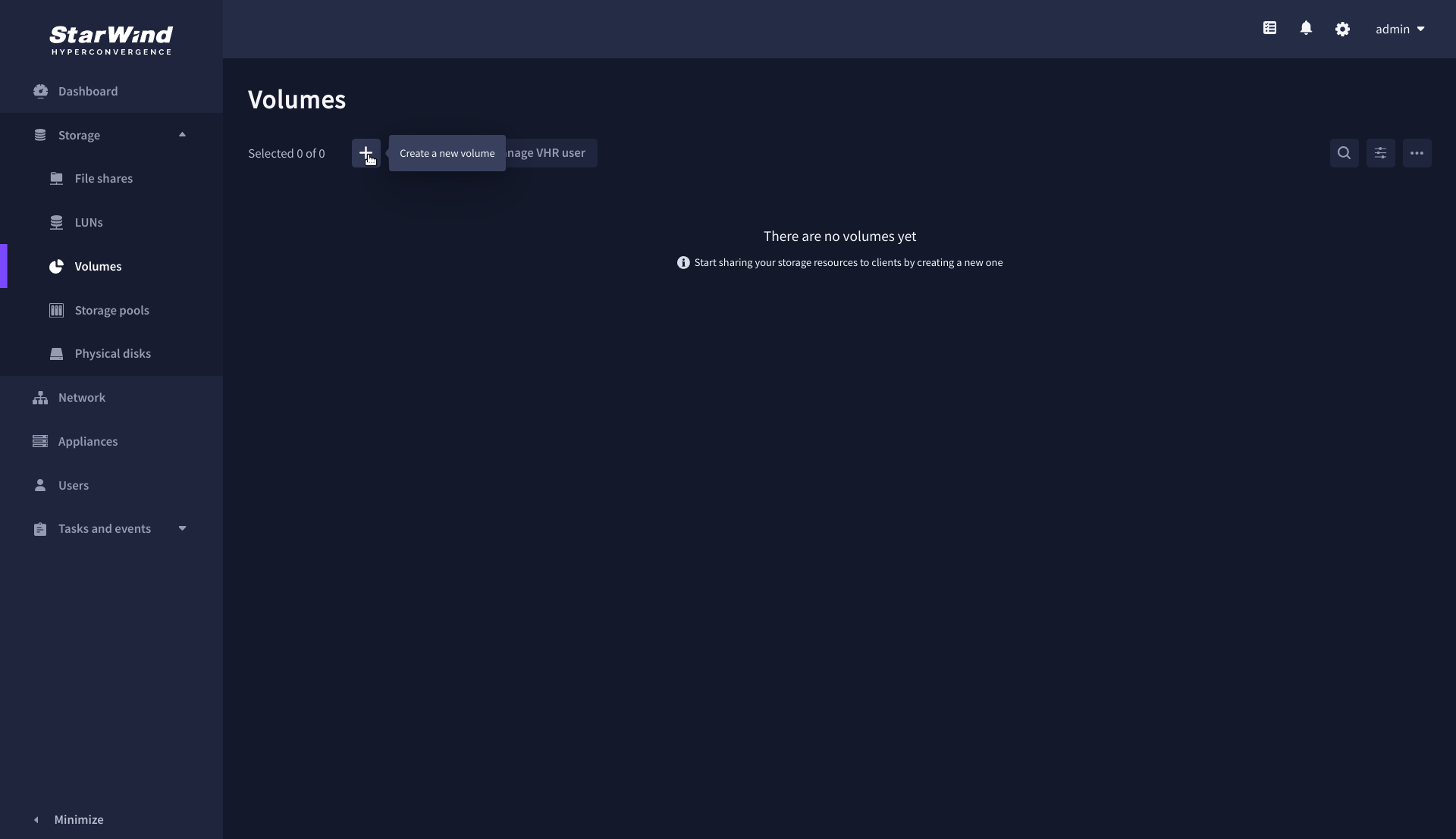

1. Navigate to the Volumes page and click the + button to open the Create volume wizard.

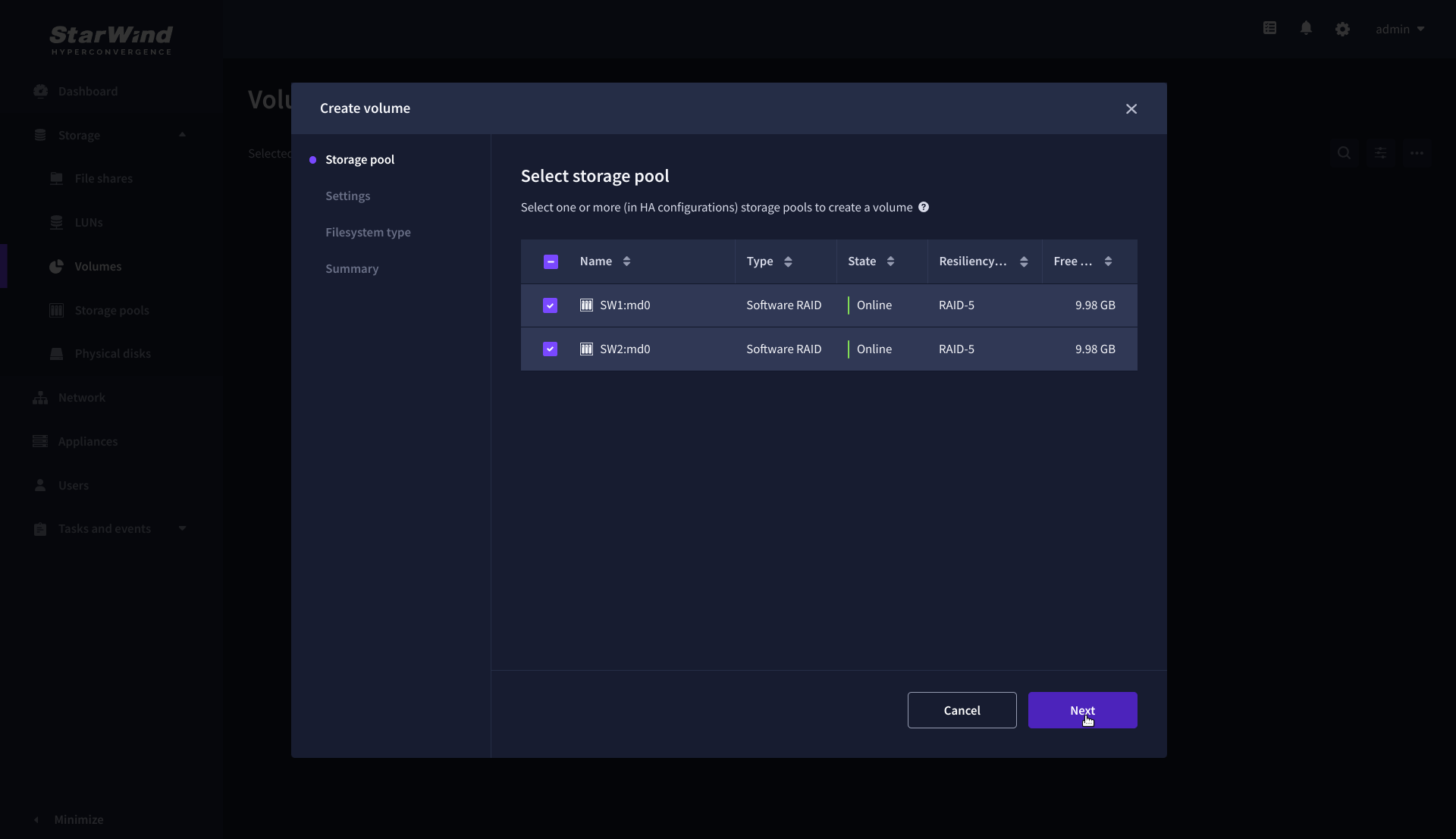

2. On the Storage pool step, select partner appliances on which to create new volumes, then click Next.

NOTE: Select 2 appliances for configuring volumes if you are deploying a two-node cluster with two-way replication, or select 3 appliances for configuring a three-node cluster with a three-way mirror.

3. On the Settings step, specify the volume name and size, then click Next.

4. On the Filesystem type step, select Standard, then click Next.

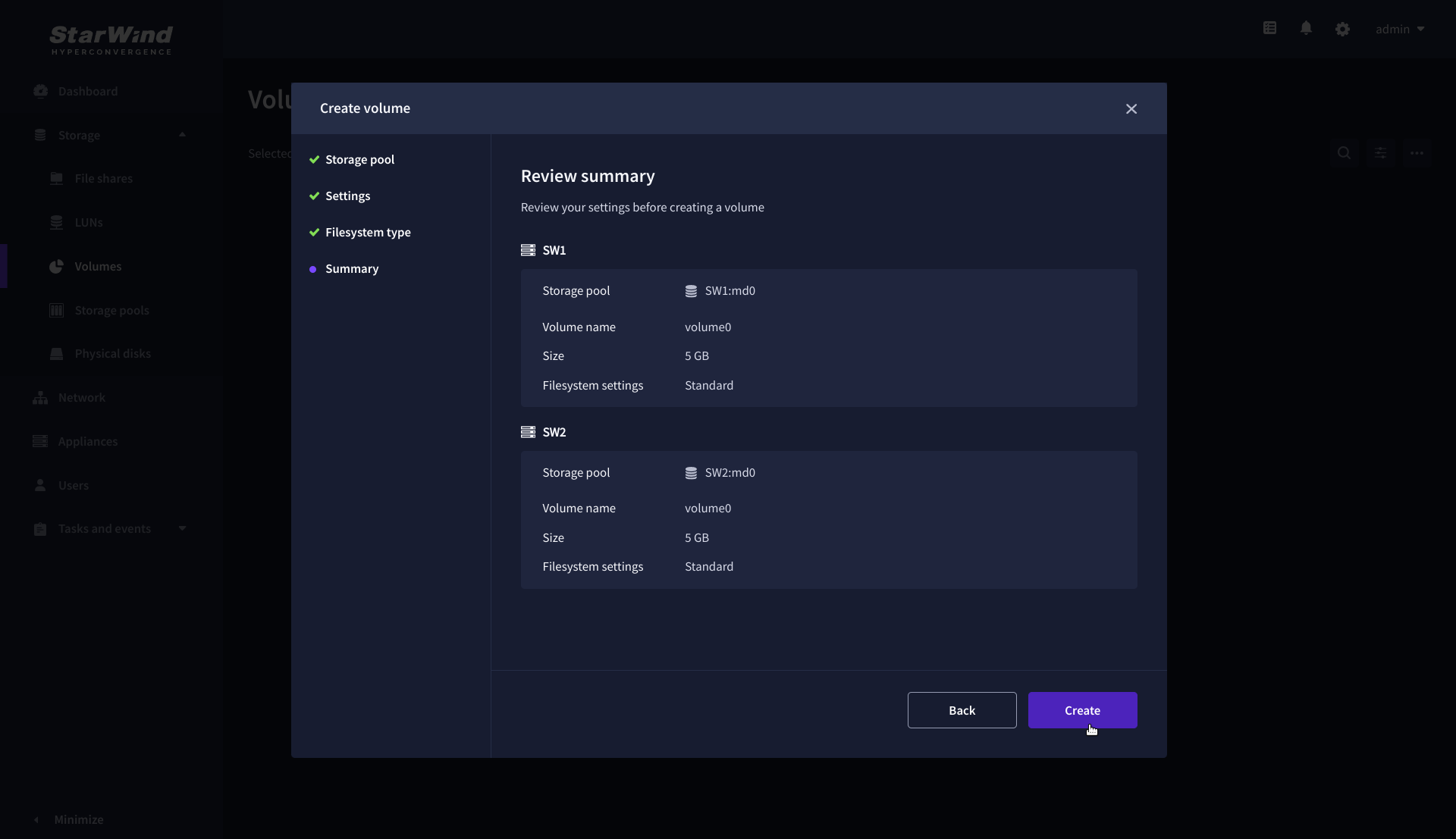

5. Review the Summary and click the Create button to create the volume.

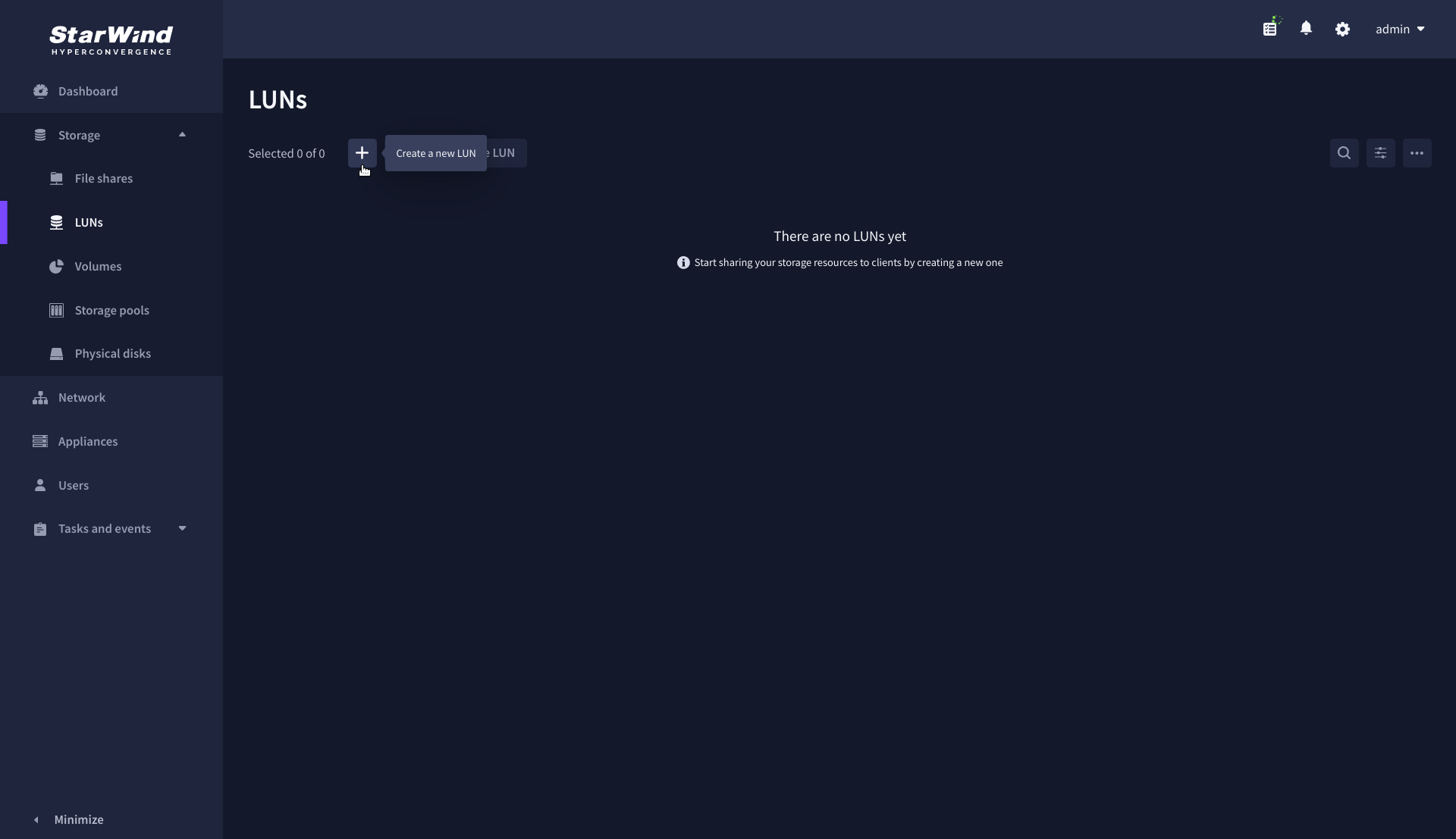

Create HA LUN using WebUI

This section describes how to create a LUN in the Web UI. This option is available for setups with Commercial, Trial, and NFR licenses applied.

For setups with a Free license applied, a PowerShell script should be used to create the LUN. In that case, follow the steps described in the section: Create StarWind HA LUNs using PowerShell

1. Navigate to the LUNs page and click the + button to open the Create LUN wizard.

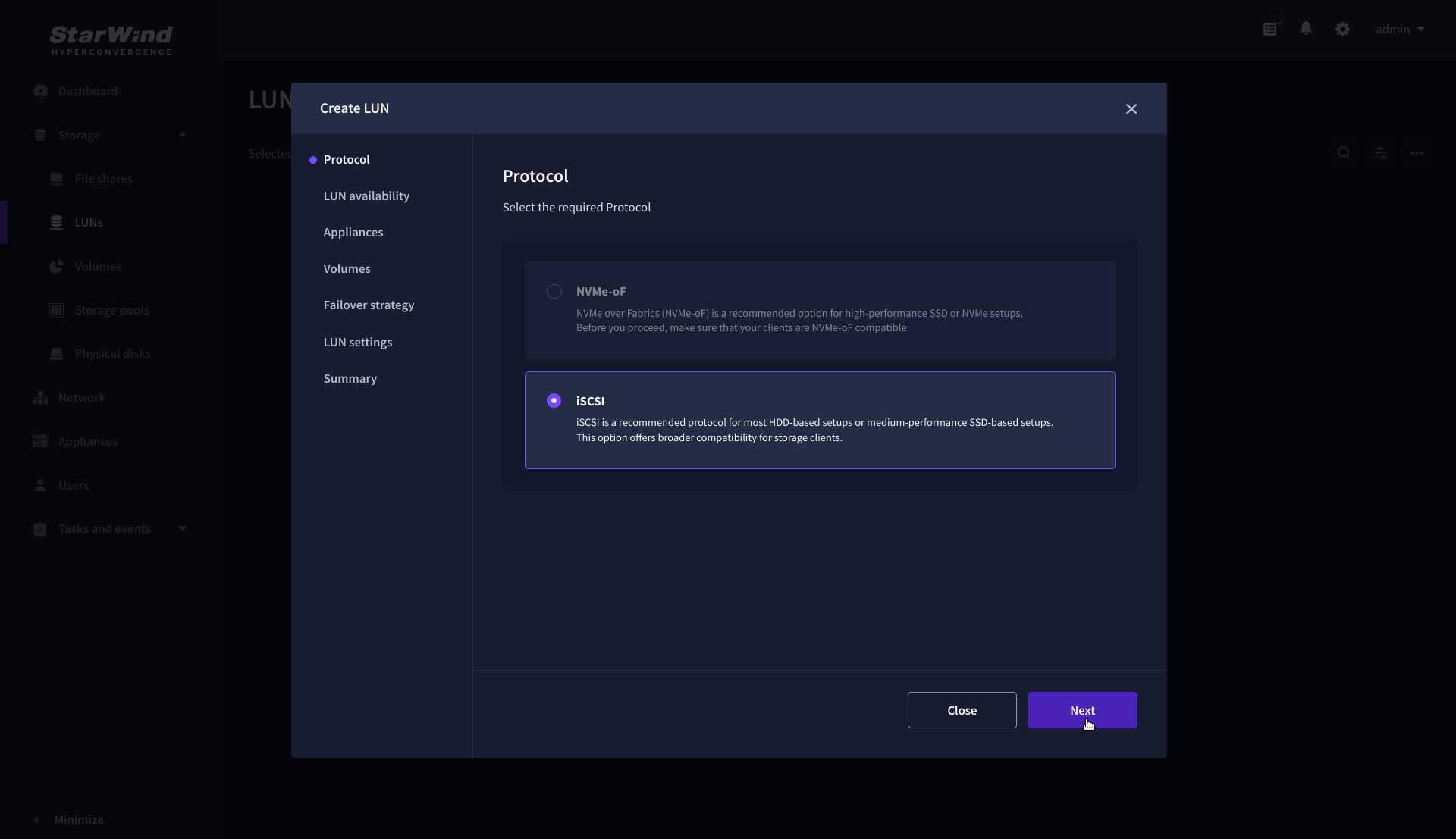

2. On the Protocols step, select the preferred storage protocol and click Next.

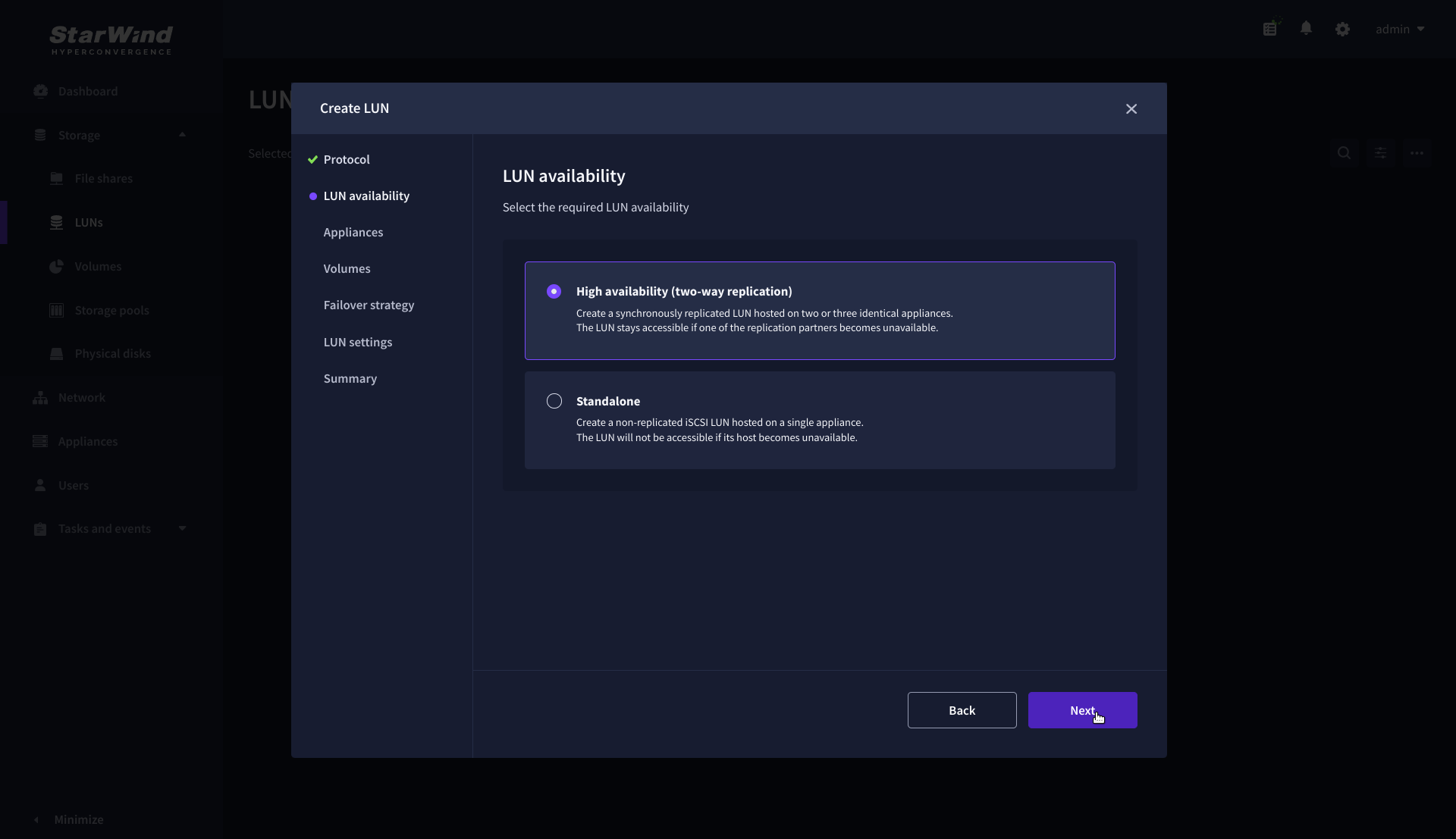

3. On the LUN availability step, select High availability and click Next.

NOTE: The availability options for a LUN can be Standalone (without replication) or High Availability (with 2-way or 3-way replication), and are determined by the StarWind Virtual SAN license.

Below are the steps for creating a high-availability iSCSI LUN.

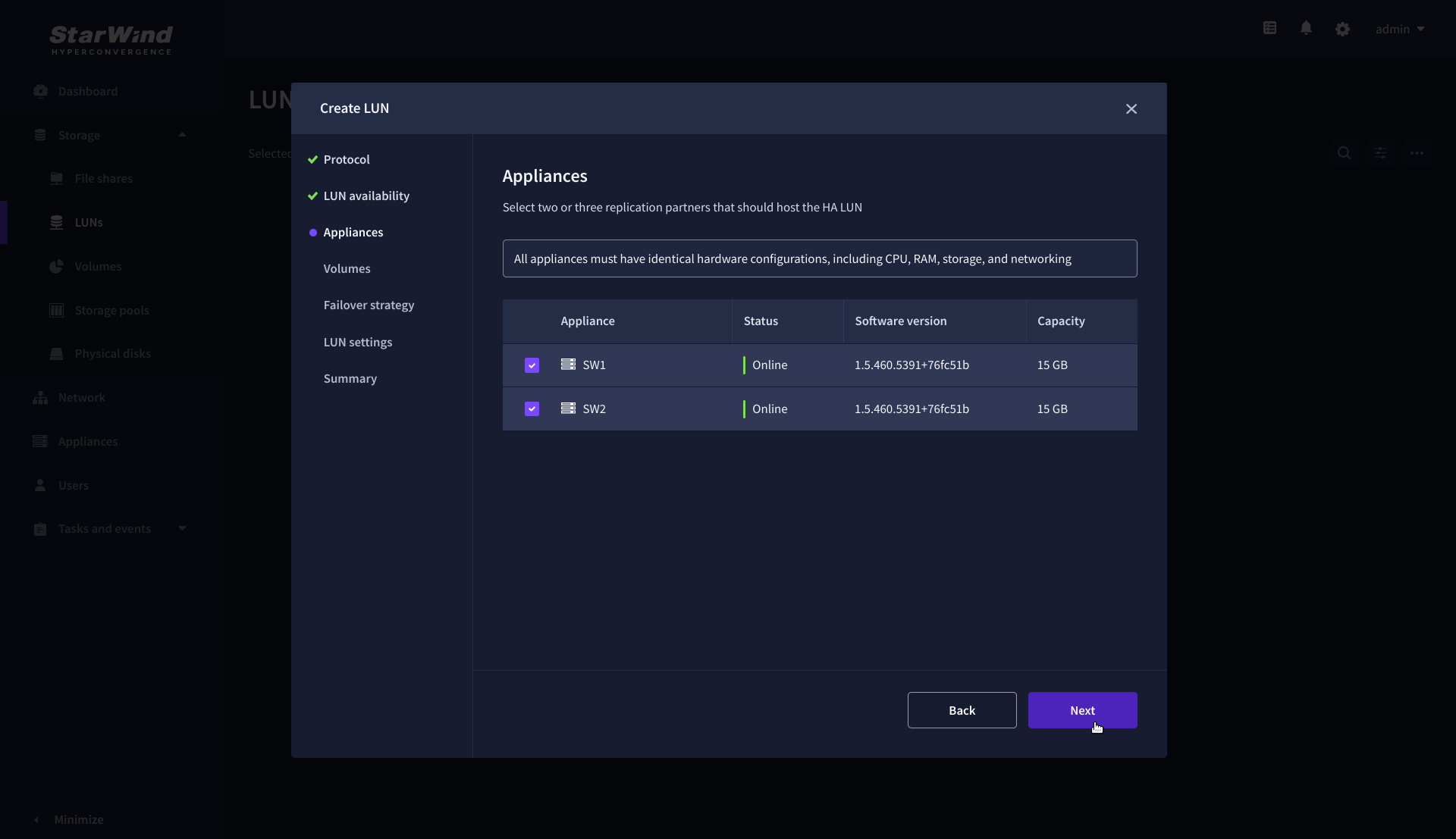

4. On the Appliances step, select partner appliances that will host new LUNs and click Next.

IMPORTANT: Selected partner appliances must have identical hardware configurations, including CPU, RAM, storage, and networking.

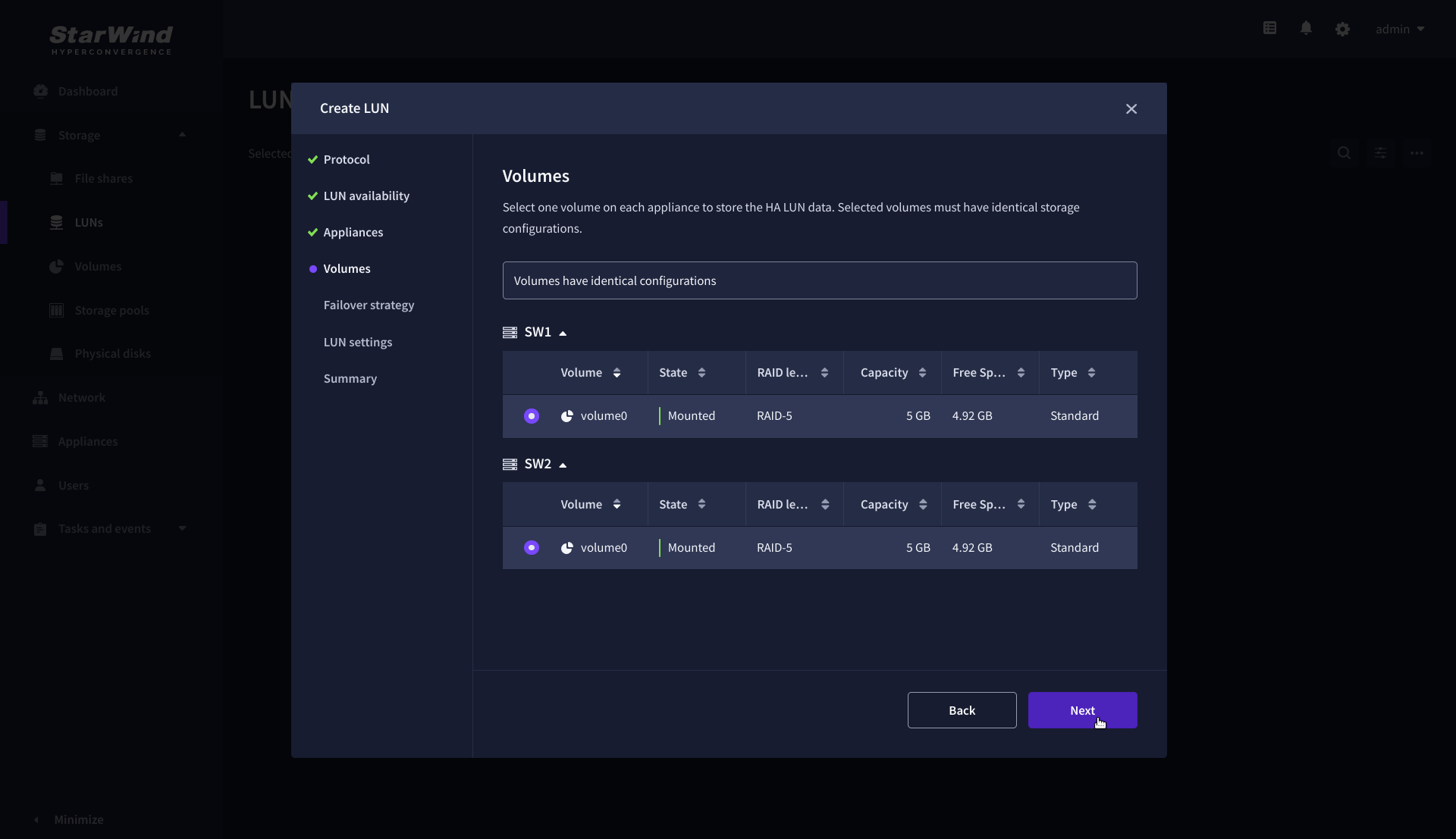

5. On the Volumes step, select the volumes for storing data on the partner appliances and click Next.

IMPORTANT: For optimal performance, the selected volumes must have identical underlying storage configurations.

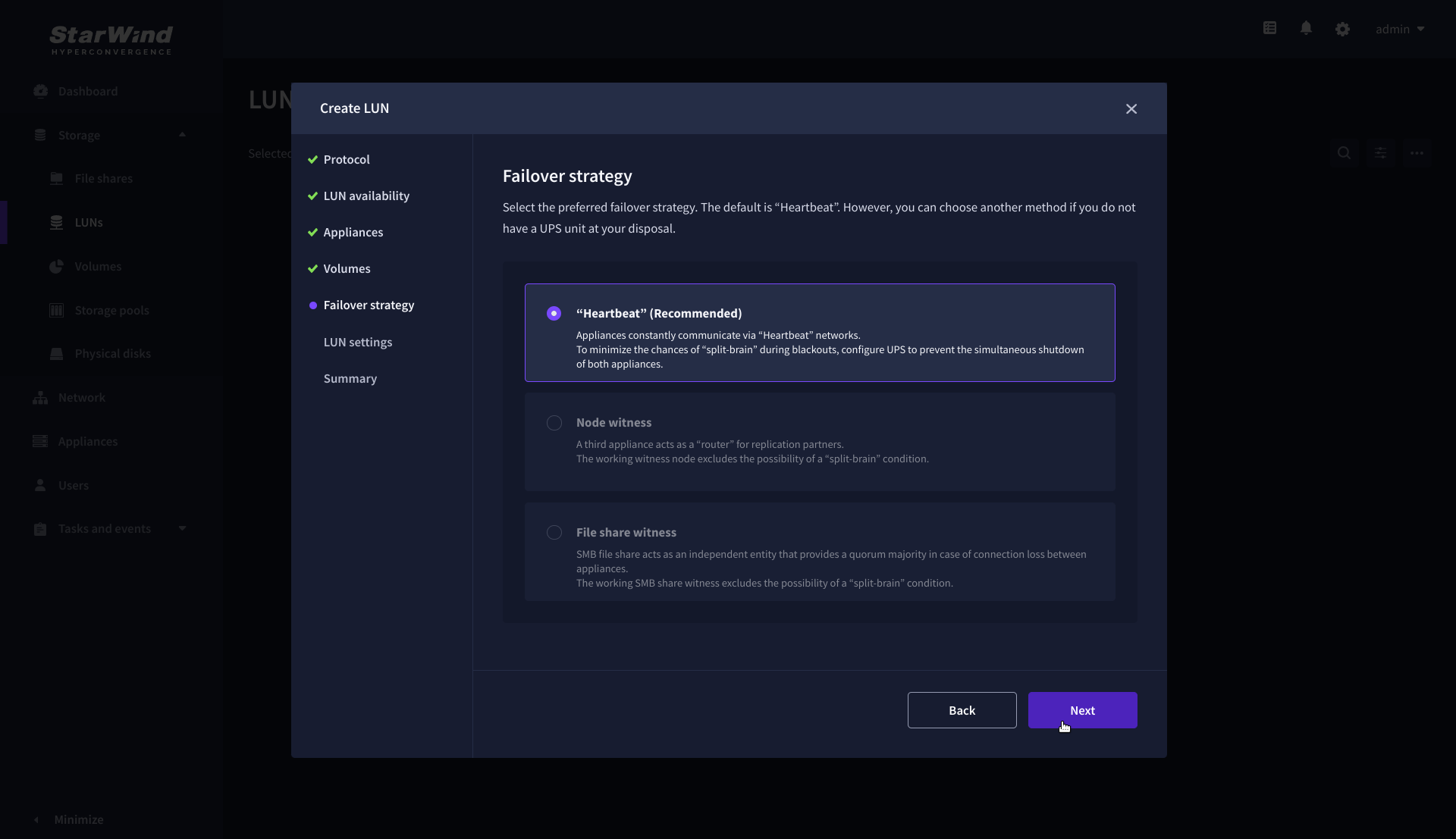

6. On the Failover strategy step, select the preferred failover strategy and click Next.

NOTE: The failover strategies for a LUN can be Heartbeat or Node Majority. For a two-node setup with the Node Majority failover strategy, either a Node witness (requires an additional third witness node) or a File share witness (requires an external file share) should be configured. These options are determined by the StarWind Virtual SAN license and setup configuration. Below are the steps for configuring the Heartbeat failover strategy in a two-node cluster.

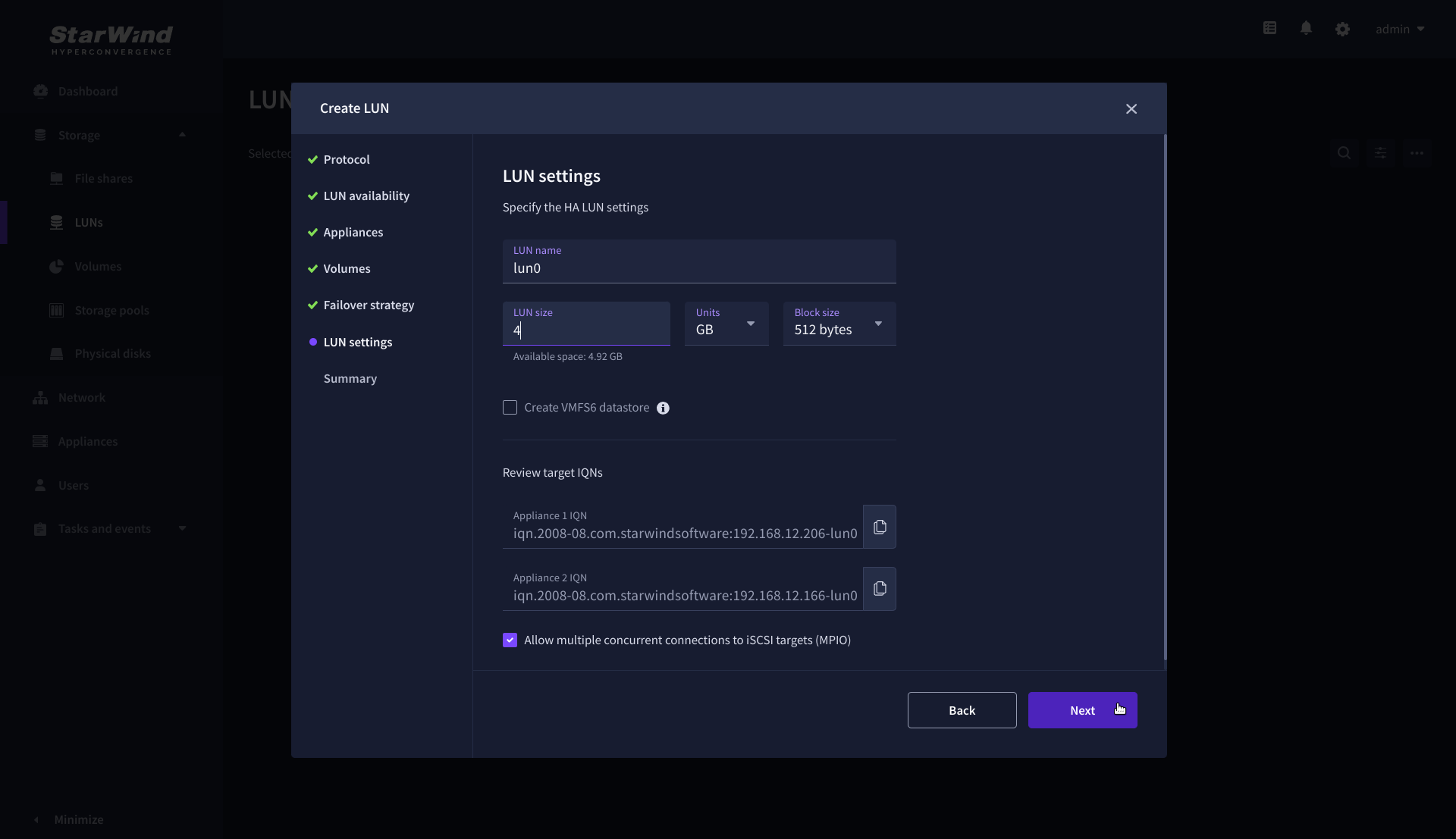

7. On the LUN settings step, specify the LUN name, size, block size, then click Next.

NOTE: For high-availability configurations, ensure that the MPIO checkbox is selected.

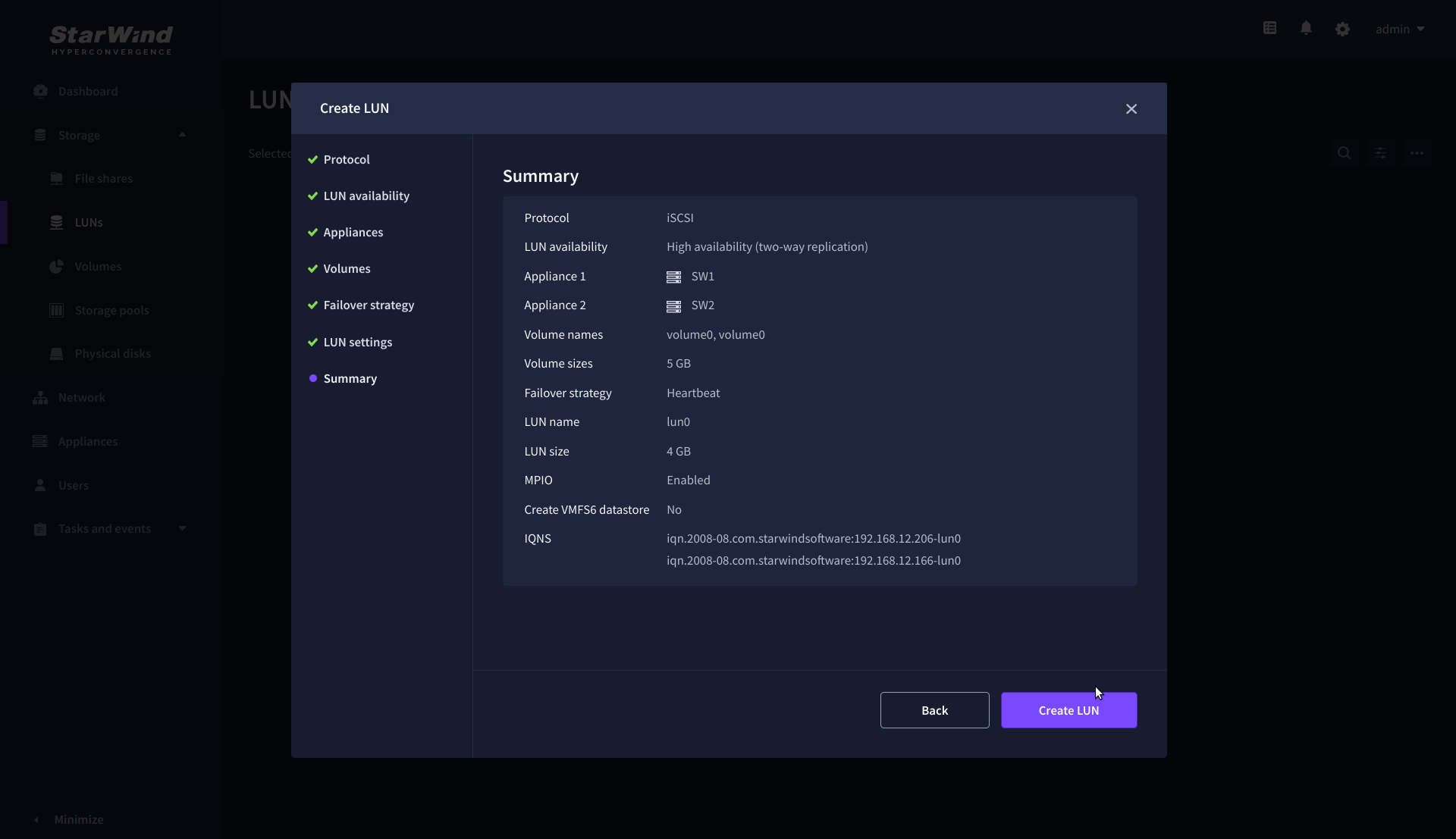

8. On the Summary step, review the LUN settings and click Create to configure new LUNs on the selected volumes.

Connecting StarWind HA Storage to Proxmox Hosts

1. Connect to the Proxmox host via SSH and install the multipathing tools.

    pve# apt-get install multipath-tools

2. Edit /etc/iscsi/initiatorname.iscsi (for example, with nano) and set the initiator name.

3. Edit /etc/iscsi/iscsid.conf setting the following parameters:

    node.startup = automatic
    node.session.timeo.replacement_timeout = 15
    node.session.scan = auto

NOTE. node.startup = manual is the default parameter, it should be changed to node.startup = automatic.

4. Create file /etc/multipath.conf using the following command:

    touch /etc/multipath.conf

5. Edit /etc/multipath.conf adding the following content:

    devices {
        device {
            vendor "STARWIND"
            product "STARWIND*"
            path_grouping_policy multibus
            path_checker "tur"
            failback immediate
            path_selector "round-robin 0"
            rr_min_io 3
            rr_weight uniform
            hardware_handler "1 alua"
        }
    }

    defaults {
        polling_interval 2
        path_selector "round-robin 0"
        path_grouping_policy multibus
        uid_attribute ID_SERIAL
        rr_min_io 100
        failback immediate
        user_friendly_names yes
    }

6. Run iSCSI discovery on both nodes (replace the example IP addresses with the iSCSI addresses of your StarWind CVMs):

    pve# iscsiadm -m discovery -t st -p 10.20.1.10
    pve# iscsiadm -m discovery -t st -p 10.20.1.20

7. Connect the iSCSI LUNs, using the target names returned by the discovery:

    pve# iscsiadm -m node -T iqn.2008-08.com.starwindsoftware:sw1-ds1 -p 10.20.1.10 -l
    pve# iscsiadm -m node -T iqn.2008-08.com.starwindsoftware:sw2-ds1 -p 10.20.1.20 -l

8. Get the WWID of the StarWind HA device:

    /lib/udev/scsi_id -g -u -d /dev/sda

9. The WWID must be added to the file ‘/etc/multipath/wwids’. To do this, run the following command with the appropriate WWID:

    multipath -a %WWID%

10. Restart the multipath service.

    systemctl restart multipath-tools.service

11. Check if multipathing is running correctly:

    pve# multipath -ll

12. Repeat steps 1-11 on every Proxmox host.

13. Create an LVM PV on the multipath device:

    pve# pvcreate /dev/mapper/mpatha

where mpatha is the alias of the StarWind LUN.

14. Create a VG on the LVM PV:

    pve# vgcreate vg-vms /dev/mapper/mpatha

15. Log in to the Proxmox web interface and go to Datacenter -> Storage. Add new LVM storage based on the VG created on top of the StarWind HA device. Enable the Shared checkbox. Click Add.

16. Log in via SSH to all hosts and run the following command:

    pvscan --cache
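The iscsid.conf changes from step 3 can also be applied non-interactively. The following is a minimal sketch, assuming GNU sed. The helper set_iscsid_param is hypothetical, and the demonstration runs against a scratch file with sample contents rather than /etc/iscsi/iscsid.conf.

```shell
#!/bin/sh
# Hypothetical helper: set "key = value" in an iscsid.conf-style file.
# An existing uncommented entry is rewritten; otherwise the entry is appended.
set_iscsid_param() {
    file=$1
    key=$2
    value=$3
    if grep -q "^[[:space:]]*$key[[:space:]]*=" "$file"; then
        sed -i "s|^[[:space:]]*$key[[:space:]]*=.*|$key = $value|" "$file"
    else
        printf '%s = %s\n' "$key" "$value" >> "$file"
    fi
}

# Scratch file with sample starting values; on a real host use /etc/iscsi/iscsid.conf.
conf=$(mktemp)
printf 'node.startup = manual\nnode.session.timeo.replacement_timeout = 120\n' > "$conf"

set_iscsid_param "$conf" node.startup automatic
set_iscsid_param "$conf" node.session.timeo.replacement_timeout 15
set_iscsid_param "$conf" node.session.scan auto
cat "$conf"
```

Node records created by a discovery take their settings from iscsid.conf at that moment, so the file should be changed before step 6 is run.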

Configure storage rescan

1. Download the archive with the rescan scripts:

https://tmplink.starwind.com/proxmox_rescan.zip

2. Log in to the StarWind CVM via SSH.

3. Install the sshpass package:

    apt update
    apt install sshpass

4. Extract the proxmox_rescan.zip archive.

5. Upload logwatcher.py and rescan_px.sh to the /opt/starwind/starwind-virtual-san/drive_c/starwind/ directory.

6. Set the host IP address and password in rescan_px.sh.

Conclusion

Following this guide, a StarWind Virtual HCI Appliance (VHCA) powered by Proxmox Virtual Environment was deployed and configured with StarWind Virtual SAN (VSAN) running in a CVM on each host. As a result, a virtual shared storage “pool” accessible by all cluster nodes was created for storing highly available virtual machines.